Installation on Non-Air-Gapped vSphere OpenShift Cluster

This topic provides instructions for installing Portworx on a non-air-gapped VMware vSphere OpenShift cluster using the OpenShift Container Platform web console.

Installing Portworx on a non-air-gapped VMware vSphere OpenShift cluster involves the following tasks:

- Create a Monitoring ConfigMap

- Configure Storage DRS settings

- Create a vCenter user account for Portworx

- Create a secret with your vCenter user credentials

- Generate Portworx Enterprise Specification

- Install Portworx Operator using OpenShift Console

- Deploy Portworx using OpenShift Console

- Verify Portworx Cluster Status

- Verify Portworx Pod Status

Complete all the tasks to install Portworx.

Create a Monitoring ConfigMap

Enable monitoring for user-defined projects before installing the Portworx Operator. Use the instructions in this section to configure the OpenShift Prometheus deployment to monitor Portworx metrics.

To integrate OpenShift’s monitoring and alerting system with Portworx, create a cluster-monitoring-config ConfigMap in the openshift-monitoring namespace:

apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    enableUserWorkload: true

The enableUserWorkload parameter enables monitoring for user-defined projects in the OpenShift cluster. This creates a prometheus-operated service in the openshift-user-workload-monitoring namespace.
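The monitoring stack reconciles this setting in the background, so the prometheus-operated service can take a short while to appear. The following Bash sketch applies the ConfigMap and polls for that service. It assumes the YAML above is saved as cluster-monitoring-config.yaml (a hypothetical file name), that `oc` is logged in as a cluster administrator, and that a 300-second timeout (an arbitrary example value) is long enough for your cluster.

```shell
#!/usr/bin/env bash
# Run a command once per second until it succeeds or the given number of
# attempts (roughly seconds) is used up. Returns 0 on success, 1 on timeout.
wait_for() {
  local seconds=$1
  shift
  local elapsed
  for ((elapsed = 0; elapsed < seconds; elapsed++)); do
    if "$@" >/dev/null 2>&1; then
      return 0
    fi
    sleep 1
  done
  return 1
}

# Apply the ConfigMap, then wait for the user workload Prometheus service.
# Not invoked here; call it from a shell that is logged in to the cluster.
# cluster-monitoring-config.yaml is a hypothetical file name.
enable_user_workload_monitoring() {
  oc apply -f cluster-monitoring-config.yaml &&
    wait_for 300 oc -n openshift-user-workload-monitoring get service prometheus-operated
}
```

Run `enable_user_workload_monitoring` from a logged-in shell; a non-zero exit status means the service did not appear before the timeout.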

Configure Storage DRS settings

Portworx does not support moving VMDK files from the datastores where they were originally created. Do not move these files manually or configure any settings that could result in their movement.

To prevent Storage DRS from moving VMDK files, log in to your vSphere console and configure the following settings:

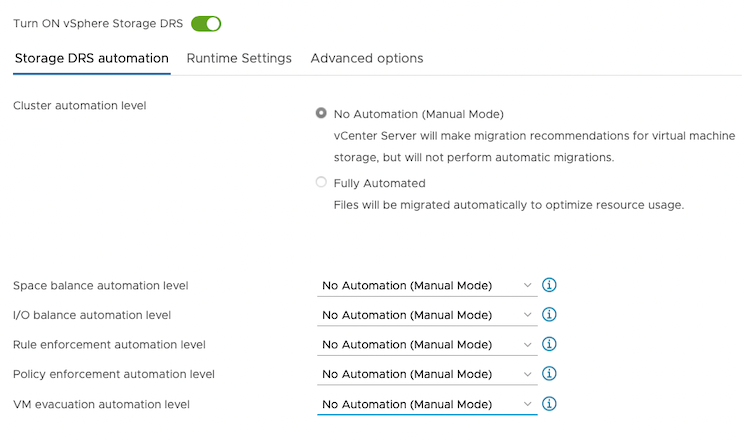

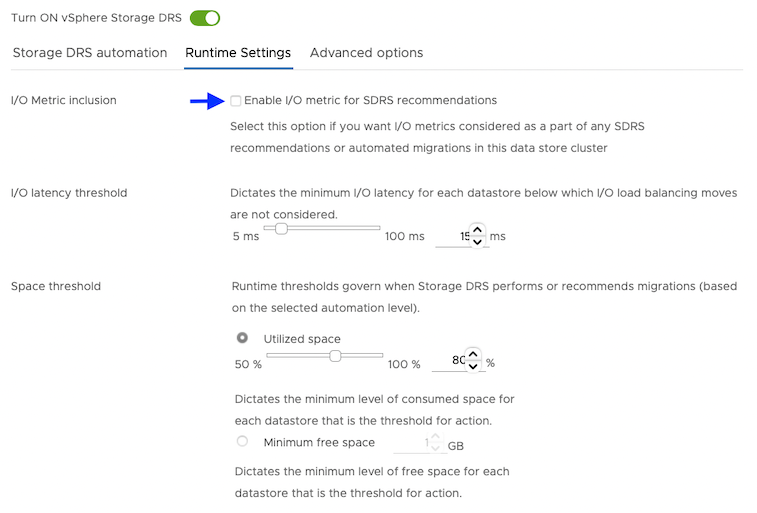

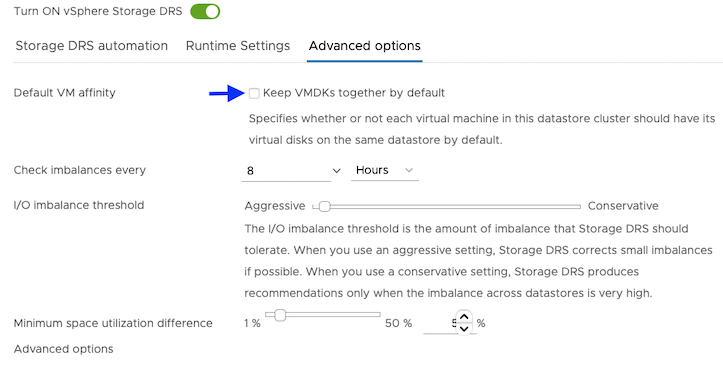

From the Edit Storage DRS Settings window of the datastore cluster, do the following:

- From the Storage DRS automation tab, choose the No Automation (Manual Mode) option, and set the same option for the other settings on that tab, as shown in the screenshots above.

- From the Runtime Settings tab, clear the Enable I/O metric for SDRS recommendations checkbox.

- From the Advanced options tab, clear the Keep VMDKs together by default checkbox.

Create a vCenter user account for Portworx

Using your vSphere console, provide Portworx with a vCenter server user account that has the following minimum vSphere privileges at the vCenter datacenter level:

- Datastore

  - Allocate space

  - Browse datastore

  - Low level file operations

  - Remove file

- Host

  - Local operations

    - Reconfigure virtual machine

- Virtual machine

  - Change Configuration

    - Add existing disk

    - Add new disk

    - Add or remove device

    - Advanced configuration

    - Change Settings

    - Extend virtual disk

    - Modify device settings

    - Remove disk

If you create a custom role with these privileges, select Propagate to children when you assign the user to the role.

Why select Propagate to children? In vSphere, resources are organized hierarchically. Selecting Propagate to children ensures that the permissions granted to the custom role apply not only to the targeted object but also to all objects in its subtree, including VMs, datastores, networks, and other resources nested under the selected resource.
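If you manage vCenter from the command line, you can create the same role with govc, the vSphere CLI from the govmomi project. The sketch below assumes govc is installed and already configured for your vCenter (for example through the GOVC_URL environment variable). The privilege IDs are the vSphere API names that correspond to the console privileges listed above; confirm them against your vSphere version before use. The role name, principal, and datacenter path in the usage example are hypothetical.

```shell
#!/usr/bin/env bash
# vSphere privilege IDs that correspond to the console privileges listed above.
PX_PRIVILEGES=(
  Datastore.AllocateSpace
  Datastore.Browse
  Datastore.FileManagement              # Low level file operations
  Datastore.DeleteFile                  # Remove file
  Host.Local.ReconfigVM                 # Local operations > Reconfigure virtual machine
  VirtualMachine.Config.AddExistingDisk
  VirtualMachine.Config.AddNewDisk
  VirtualMachine.Config.AddRemoveDevice
  VirtualMachine.Config.AdvancedConfig
  VirtualMachine.Config.Settings        # Change Settings
  VirtualMachine.Config.DiskExtend      # Extend virtual disk
  VirtualMachine.Config.EditDevice      # Modify device settings
  VirtualMachine.Config.RemoveDisk
)

# Print the govc commands instead of running them, so they can be reviewed first.
# Arguments (all hypothetical examples): role name, vCenter principal, datacenter path.
print_px_role_commands() {
  local role=$1 principal=$2 datacenter=$3
  echo "govc role.create ${role} ${PX_PRIVILEGES[*]}"
  # -propagate=true is the CLI counterpart of selecting Propagate to children.
  echo "govc permissions.set -principal ${principal} -role ${role} -propagate=true ${datacenter}"
}
```

For example, `print_px_role_commands portworx portworx@vsphere.local /Datacenter` prints both commands; review the output before running them.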

Create a secret with your vCenter user credentials

Create the secret using one of the following methods:

- Kubernetes Secret

- Vault Secret

To create a Kubernetes Secret:

- Get the base64-encoded vCenter user name and password by running the following commands:

  - For VSPHERE_USER: echo -n '<vcenter-server-user>' | base64

  - For VSPHERE_PASSWORD: echo -n '<vcenter-server-password>' | base64

  The -n option stops echo from appending a newline, which would otherwise be encoded as part of the value.

- Update the following Kubernetes Secret template with the VSPHERE_USER and VSPHERE_PASSWORD values from the previous step:

  apiVersion: v1
  kind: Secret
  metadata:
    name: px-vsphere-secret
    namespace: <px-namespace>
  type: Opaque
  data:
    VSPHERE_USER: XXXX
    VSPHERE_PASSWORD: XXXX

- Apply the updated template to create the secret with your vCenter user name and password:

  oc apply -f <updated-secret-template.yaml>

To use a Vault secret, see Vault Secret Provider for information on how to configure and store the secret key for vSphere in Vault. Ensure that the correct vSphere credentials are securely stored in Vault before you install Portworx.

For either method, ensure that ports 17001-17020 on worker nodes are reachable from the control plane node and from other worker nodes.
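If you use a Kubernetes Secret, the following Bash sketch renders the same px-vsphere-secret manifest. It encodes the values with printf, which, unlike a plain echo, does not append a trailing newline, and it strips the line wrapping that some base64 implementations insert into long output. The namespace and credential arguments are placeholders that you supply.

```shell
#!/usr/bin/env bash
# Base64-encode a value with no trailing newline and no line wrapping.
b64() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# Render the px-vsphere-secret manifest.
# Arguments: namespace, vCenter user, vCenter password.
render_vsphere_secret() {
  local namespace=$1 user=$2 password=$3
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: px-vsphere-secret
  namespace: ${namespace}
type: Opaque
data:
  VSPHERE_USER: $(b64 "$user")
  VSPHERE_PASSWORD: $(b64 "$password")
EOF
}
```

For example, `render_vsphere_secret <px-namespace> '<vcenter-server-user>' '<vcenter-server-password>' | oc apply -f -` creates the secret without a temporary file. Alternatively, `oc create secret generic px-vsphere-secret -n <px-namespace> --from-literal=VSPHERE_USER='<vcenter-server-user>' --from-literal=VSPHERE_PASSWORD='<vcenter-server-password>'` lets oc do the encoding. Both approaches pass the password on the command line, where it can end up in shell history.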

Generate Portworx Enterprise Specification

Before you install Portworx, generate the Kubernetes manifests to deploy in your vSphere OpenShift cluster by following these steps:

- Sign in to the Portworx Central console.

  The system displays the Welcome to Portworx Central! page.

- In the Portworx Enterprise section, select Generate Cluster Spec.

  The system displays the Generate Spec page.

- From the Portworx Version dropdown menu, select the Portworx version to install.

- From the Platform dropdown menu, select vSphere.

- In the vCenter Endpoint field, specify the hostname or IP address of the vSphere server.

- In the vCenter Datastore Prefix field, specify the datastore name(s) or datastore cluster name(s) available for Portworx.

  To specify multiple datastore names or datastore cluster names, enter a generic prefix common to all the datastores or datastore clusters. For example, if you want Portworx to use three datastores named px-datastore-01, px-datastore-02, and px-datastore-03, specify px or px-datastore.

- From the Distribution Name dropdown menu, select Openshift 4+.

- (Optional) To customize the configuration options and generate a custom specification, click Customize and perform the following steps:

  Note: To continue without customizing the default configuration or generating a custom specification, skip this step and click Save & Download.

  - Basic tab:

    - To use an existing etcd cluster, do the following:

      - Select the Your etcd details option.

      - In the field provided, enter the host name or IP address and the port number. For example, http://test.com.net:1234.

        To add another etcd cluster, click the + icon.

        Note: You can add up to three etcd clusters.

      - Select one of the following authentication methods:

        - Disable HTTPS – To use HTTP for etcd communication.

        - Certificate Auth – To use HTTPS with an SSL certificate. For more information, see Secure your etcd communication.

        - Password Auth – To use HTTPS with username and password authentication.

    - To use an internal Portworx-managed key-value store (kvdb), do the following:

      - Select the Built-in option.

      - To enable TLS-encrypted communication among KVDB nodes and between Portworx nodes and the KVDB cluster, select the Enable TLS for internal kvdb checkbox.

      - If your cluster does not already have a cert-manager, select the Deploy Cert-Manager for TLS certificates checkbox.

    - Select Next.

  - Storage tab:

    - To enable Portworx to provision drives using a specification, do the following:

      - Select the Create Using a Spec option.

      - (Optional) Select PX-Store Version: To designate PX-StoreV1 as the datastore, select PX-StoreV1. By default, the system selects PX-StoreV2 as the datastore.

      - To add one or more storage drive types for Portworx to use, click + Add Drive and select one of the following types of drives:

        - Lazy-Zeroed Thick

        - Eager-Zeroed Thick

        - Thin

        Note: The system automatically selects the minimum number of drives to ensure optimal performance.

      - Configure the following fields for the drive:

        - Size (GB) - Specify the size of the drive in gigabytes.

        - Action - Use the trash icon to remove a drive type from the configuration.

      - (Optional) To add more storage drives, click one of the following options based on the drive type:

        - + Add Lazy-Zeroed Thick Drives

        - + Add Eager-Zeroed Thick Drives

        - + Add Thin Drives

      - (Optional) In the Initial Storage Nodes field, enter the number of storage nodes to create across zones and node pools.

      - From the Default IO Profile dropdown menu, select Auto.

        This enables Portworx to automatically choose the best I/O profile based on detected workload patterns.

      - From the Journal Device dropdown menu, select one of the following:

        - None – To use the default journaling setting.

        - Auto – To automatically allocate journal devices.

        - Custom – To manually choose a volume type for the journal device.

    - To enable Portworx to use all available, unused, and unmounted drives on the node, do the following:

      - Select the Consume Unused option.

      - (Optional) To designate PX-StoreV1 as the datastore, clear the PX-StoreV2 checkbox. By default, the system selects PX-StoreV2 as the datastore.

      - For PX-StoreV2, in the Metadata Path field, enter a pre-provisioned path for storing the Portworx metadata. The path must be at least 64 GB in size.

      - From the Journal Device dropdown menu, select one of the following:

        - None – To use the default journaling setting.

        - Auto – To automatically allocate journal devices.

        - Custom – To manually enter a journal device path. Enter the path of the journal device in the Journal Device Path field.

      - To use unmounted disks even if they contain a partition or filesystem, select the "Use unmounted disks even if they have a partition or filesystem on it. Portworx will never use a drive or partition that is mounted" checkbox.

        Portworx does not use any mounted drive or partition.

    - To enable Portworx to use existing drives on a node, do the following:

      - Select the Use Existing Drives option.

      - (Optional) To designate PX-StoreV1 as the datastore, clear the PX-StoreV2 checkbox. By default, the system selects PX-StoreV2 as the datastore.

      - For PX-StoreV2, in the Metadata Path field, enter a pre-provisioned path for storing the Portworx metadata. The path must be at least 64 GB in size.

      - In the Drive/Device field, specify the block drive(s) that Portworx uses for data storage. To add another block drive, click the + icon.

      - (Optional) In the Pool Label field, assign a custom label in key:value format to identify and categorize storage pools. For more information, see How to assign custom labels to device pools.

      - From the Journal Device dropdown menu, select one of the following:

        - None – To use the default journaling setting.

        - Auto – To automatically allocate journal devices.

        - Custom – To manually enter a journal device path. Enter the path of the journal device in the Journal Device Path field.

    - Select Next.

  - Network tab:

    - In the Interface(s) section, do the following:

      - Enter the Data Network Interface to be used for data traffic.

      - Enter the Management Network Interface to be used for management traffic.

    - In the Advanced Settings section, enter the Starting port for Portworx services.

    - Select Next.

  - Deployment tab:

    - In the Kubernetes Distribution section, under Are you running on either of these?, select Openshift 4+.

    - In the Component Settings section:

      - Select the Enable Stork checkbox to enable Stork.

      - Select the Enable Monitoring checkbox to enable Prometheus-based monitoring of Portworx components and resources.

      - To configure how Prometheus is deployed and managed in your cluster, choose one of the following:

        - Portworx Managed - To enable Portworx to install and manage Prometheus and the Prometheus Operator automatically. Ensure that no other Prometheus Operator instance is already running on the cluster.

        - User Managed - To manage your own Prometheus stack. You must enter a valid URL of the Prometheus instance in the Prometheus URL field.

      - Select the Enable Autopilot checkbox to enable Portworx Autopilot. For more information on Autopilot, see Expanding your Storage Pool with Autopilot.

      - Select the Enable Telemetry checkbox to enable telemetry in the StorageCluster spec. For more information, see Enable Pure1 integration for upgrades on a VMware vSphere cluster.

      - Enter the prefix for the Portworx cluster name in the Cluster Name Prefix field.

      - Select the Secrets Store Type from the dropdown menu to store and manage secure information for features such as CloudSnaps and Encryption.

    - In the Environment Variables section, enter name-value pairs in the respective fields.

    - In the Registry and Image Settings section:

      - Enter the Custom Container Registry Location to download the Docker images.

      - Enter the Kubernetes Docker Registry Secret that serves as the authentication to access the custom container registry.

      - From the Image Pull Policy dropdown menu, select Default, Always, IfNotPresent, or Never. This policy influences how images are managed on the node and when updates are applied.

    - In the Security Settings section, select the Enable Authorization checkbox to enable Role-Based Access Control (RBAC) and secure access to storage resources in your cluster.

    - Click Finish.

  - On the summary page, enter a name for the specification in the Spec Name field and tags in the Spec Tags field.

  - Click Download .yaml to download the YAML file with the customized specification, or click Save Spec to save the specification.

- Click Save & Download to generate the specification.
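The vCenter Datastore Prefix field selects datastores by name prefix. The following Bash sketch shows which names a given prefix would select, assuming plain string-prefix matching as the step above describes; it is an illustration, not Portworx's own matching code.

```shell
#!/usr/bin/env bash
# Print each datastore name (one per line on stdin) that starts with the prefix.
# Assumes simple string-prefix matching.
match_datastore_prefix() {
  local prefix=$1 name
  while IFS= read -r name; do
    # The quoted "$prefix" matches literally; the trailing * matches the rest.
    case "$name" in
      "$prefix"*) printf '%s\n' "$name" ;;
    esac
  done
}
```

For example, `printf '%s\n' px-datastore-01 px-datastore-02 px-backup | match_datastore_prefix px` prints all three names, including px-backup, while the prefix px-datastore selects only the first two. A more specific prefix avoids picking up unrelated datastores.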

Install Portworx Operator using OpenShift Console

- Sign in to the OpenShift Container Platform web console.

- Search for the Portworx Enterprise Operator:

  - OCP version 4.20 or later:

    From the OpenShift UI, go to Ecosystem > Software Catalog. From the Project dropdown, select Create Project to create a new project. Enter a name for the new project and select that project. Search for Portworx Enterprise to open the Portworx Enterprise page.

  - OCP version 4.19 or earlier:

    From the OpenShift UI, go to OperatorHub and search for Portworx Enterprise to open the Portworx Enterprise page.

- Click Install.

  The system initiates the Portworx Operator installation and displays the Install Operator page.

- In the Installation mode section, select A specific namespace on the cluster.

- From the Installed Namespace dropdown, choose Create Project.

  The system displays the Create Project window.

- Enter the name portworx and click Create to create a namespace called portworx.

- In the Console plugin section, select Enable to manage your Portworx cluster using the Portworx dashboard within the OpenShift console.

  Note: If the Portworx Operator is installed but the OpenShift Console plugin is not enabled, or was previously disabled, you can re-enable it by running the following command:

  oc patch console.operator cluster --type=json -p='[{"op":"add","path":"/spec/plugins/-","value":"portworx"}]'

- Click Install to deploy Portworx Operator in the portworx namespace.

After you successfully install Portworx Operator, the system displays the Create StorageCluster option.
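The console plugin patch command appends to /spec/plugins unconditionally, so it is not safe to re-run blindly, and under JSON Patch rules the append fails if the plugins list does not exist yet. The following Bash sketch checks the current list first. It is a hypothetical helper, not part of the Portworx Operator, and assumes the plugin is named portworx, as in the patch command.

```shell
#!/usr/bin/env bash
# Succeed if the plugin name in $1 appears in the whitespace-separated list on
# stdin (the format printed by the jsonpath expression '{.spec.plugins[*]}').
plugin_listed() {
  local want=$1 p
  local -a plugins=()
  read -r -a plugins || true   # read returns 1 at EOF without a newline but still fills the array
  for p in "${plugins[@]}"; do
    if [ "$p" = "$want" ]; then
      return 0
    fi
  done
  return 1
}

# Enable the portworx console plugin only if it is not already listed.
# Not invoked here; requires an oc session allowed to patch the console operator.
enable_px_console_plugin() {
  local current
  current=$(oc get console.operator cluster -o jsonpath='{.spec.plugins[*]}')
  if plugin_listed portworx <<<"$current"; then
    echo "portworx console plugin is already enabled"
  elif [ -z "$current" ]; then
    # No (or empty) plugins list: set the whole list, since JSON Patch cannot append to a missing array.
    oc patch console.operator cluster --type=json \
      -p='[{"op":"add","path":"/spec/plugins","value":["portworx"]}]'
  else
    oc patch console.operator cluster --type=json \
      -p='[{"op":"add","path":"/spec/plugins/-","value":"portworx"}]'
  fi
}
```

Run `enable_px_console_plugin` from a shell where oc has permission to patch the console operator configuration.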

Deploy Portworx using OpenShift Console

- Click Create StorageCluster.

  The system displays the Create StorageCluster page.

- Select YAML view.

- Copy and paste the specification that you generated in the Generate Portworx Enterprise Specification section into the text editor.

- Click Create.

  The system deploys Portworx and displays the Portworx instance in the Storage Cluster tab of the Installed Operators page. Refresh your browser to see the Portworx option in the left navigation pane. Click the Cluster tab to access the Portworx dashboard.

  Note: For clusters with PX-StoreV2 datastores, after you deploy Portworx, the Portworx Operator performs a pre-flight check across the cluster, and the check must pass on each node. This check determines whether each node in the cluster is compatible with the PX-StoreV2 datastore. If each node meets the minimum requirements for PX-StoreV2, PX-StoreV2 is automatically set as the default datastore during Portworx installation.

Verify Portworx Cluster Status

After you install Portworx Operator and create the StorageCluster, you can see the Portworx option in the left pane of the OpenShift UI.

- Click the Cluster sub-tab to view the Portworx dashboard. If Portworx is installed correctly, the status will be displayed as Running. You can also see the information about the status of Telemetry, Monitoring, and the version of Portworx and its components installed in your cluster.

- Navigate to the Node Summary section. If your cluster is running as intended, the status of all Portworx nodes displays Online.
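You can read the same node status from a terminal through the StorageNode resources that the Portworx Operator creates. The following Bash sketch assumes these resources live in the portworx namespace and report their state in `.status.phase`, with Online as the healthy value shown in the console; verify both assumptions against your operator version.

```shell
#!/usr/bin/env bash
# Print "NAME PHASE" for every StorageNode in the namespace (default: portworx).
# Not invoked here; requires an oc session logged in to the cluster.
storage_node_phases() {
  oc get storagenodes -n "${1:-portworx}" \
    -o jsonpath='{range .items[*]}{.metadata.name}{" "}{.status.phase}{"\n"}{end}'
}

# Read "NAME PHASE" lines on stdin and fail unless every node reports Online.
# Nodes without a phase are reported as "<none>"; empty input also fails.
all_nodes_online() {
  awk '
    NF == 0 { next }
    { seen = 1; phase = (NF >= 2 ? $2 : "<none>") }
    phase != "Online" { print "node " $1 " is " phase; bad = 1 }
    END { exit (bad || !seen) }
  '
}
```

For example, `storage_node_phases portworx | all_nodes_online` prints any node that is not Online and exits non-zero if it finds one. Running `/opt/pwx/bin/pxctl status` inside a Portworx pod with `oc exec` gives a more detailed view.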

Verify Portworx Pod Status

- From the left pane of the OpenShift UI, click Pods under the Workloads option.

- To check the status of all pods in the portworx namespace, select portworx from the Project dropdown. If Portworx is installed correctly, all pods are in Running status.
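The same check can be scripted. The following Bash sketch reads the output of `oc get pods -n portworx --no-headers` (columns NAME, READY, STATUS, RESTARTS, AGE) and reports any pod that is not Running or does not have all of its containers ready. It treats Completed pods as failures, which matches the expectation above that all pods are Running.

```shell
#!/usr/bin/env bash
# Read `oc get pods --no-headers` output on stdin. Report pods that are not
# Running or whose READY count (for example "1/2") is incomplete, and fail
# if any such pod exists or if no pods were listed.
check_pods() {
  awk '
    NF == 0 { next }
    {
      seen = 1
      split($2, ready, "/")   # READY column, e.g. "2/2"
      if ($3 != "Running" || ready[1] != ready[2]) {
        print "pod " $1 ": status " $3 ", ready " $2
        bad = 1
      }
    }
    END { exit (bad || !seen) }
  '
}
```

For example, `oc get pods -n portworx --no-headers | check_pods` prints nothing and exits 0 when every pod is Running and ready.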

What to do next

Create a PVC. For more information, see Create your first PVC.