Install Portworx on Spectro Cloud

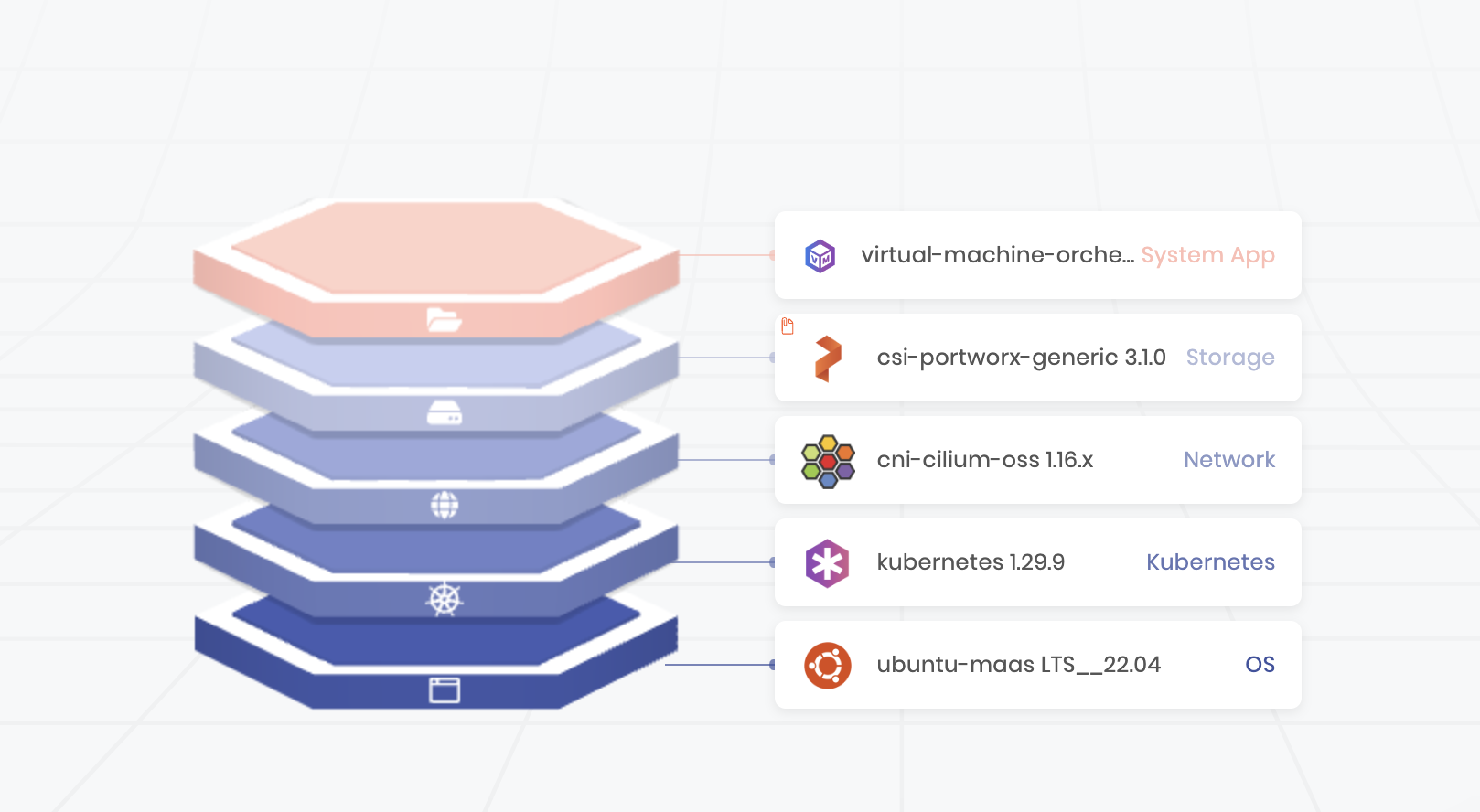

To install Portworx on Spectro Cloud, you need to prepare the Cluster Profile template to customize the Operating System Layer. After configuration, the cluster profile layers will look similar to the following.

Note: The Network, System App, and other layers can differ based on your configuration. For more information on cluster profiles, refer to the Spectro Cloud documentation.

Before you begin

- Ensure your cluster meets the Portworx prerequisites for Spectro Cloud.

- This guide assumes your infrastructure provider is Canonical Metal as a Service (MAAS) on Spectro Cloud and uses a storage array, such as Pure Storage FlashArray, with pre-provisioned volumes attached as LUNs over iSCSI to Portworx nodes.

However, other storage options are also supported:

- Using a Pure Storage FlashArray is not required. Other external storage arrays with pre-provisioned volumes or local disks within the servers can also be used.

- When using an external storage array (such as FlashArray or others), iSCSI is not mandatory—Fibre Channel (FC) can also be used for connectivity.

- When using FlashArrays specifically, cloud disks are used instead of pre-provisioned volumes.

For a list of infrastructure providers supported by Spectro Cloud, refer to Creating clusters on Palette.

- To prepare Bare-Metal Cluster API (CAPI) clusters, refer to the Reliable Distributed Storage for Bare-Metal CAPI Clusters blog post. It covers essential prerequisites:

- Configure static IPs on all nodes.

- Remove all disks from the MAAS configuration to prevent data loss during repaving.

- Disable the “Erase nodes disks prior to releasing” option.

Follow the blog for additional preparation steps.

These installation steps target a specific use case. If they don't match your requirements, follow the Bare Metal Install Guide or the FlashArray Direct Access guide for a more generic installation approach.

Prepare your Cluster Profile

To configure your new cluster profile:

- Select MAAS as the cloud type (infrastructure provider) of the new cluster profile.

- Choose Ubuntu Base OS Pack as the base OS pack. This pack requires the following additional customizations:

These steps are essential only if your Portworx nodes use iSCSI to connect to a backend storage array. If the nodes rely solely on locally attached drives, this configuration is not necessary. For more details about repaving behavior and configuration, refer to the Repave Behavior and Configuration documentation.

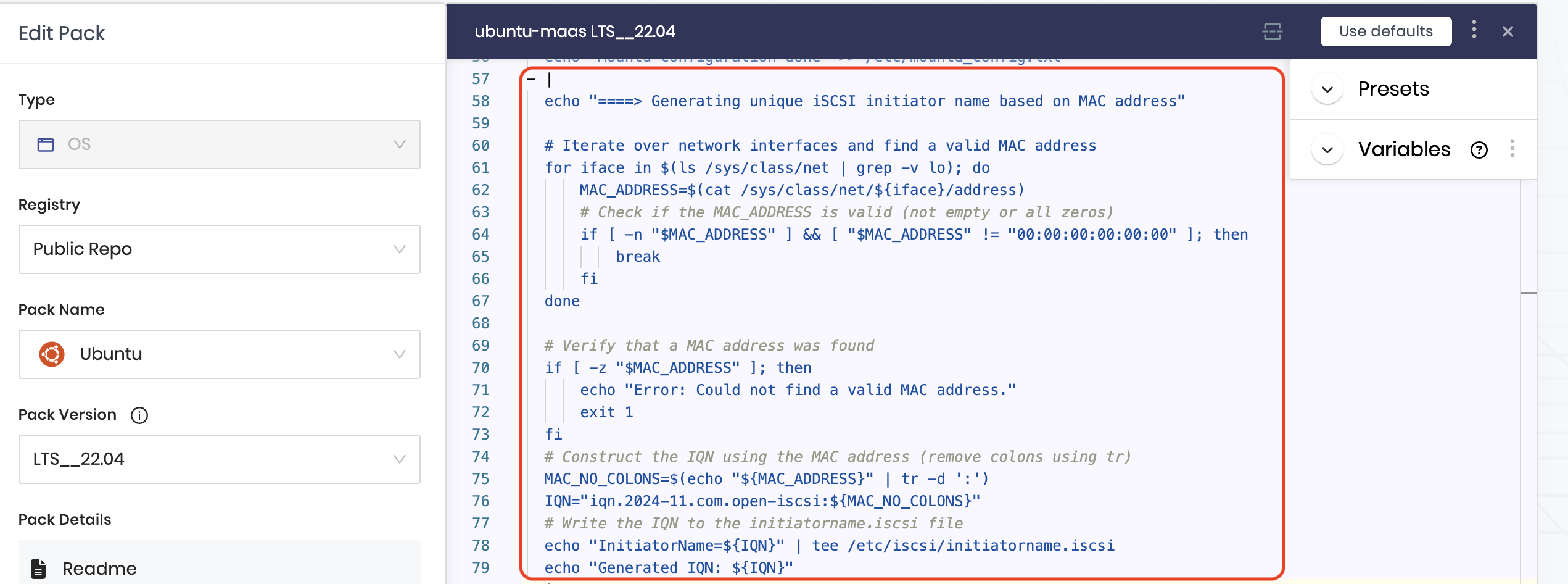

Configuring the OS pack: edit the values under Pack Details so that Portworx works correctly.

- Unique iSCSI initiator name that doesn't change.

To keep the iSCSI initiator name consistent across reboots and node repaving, implement a script that generates a unique iSCSI initiator name. The script can use the MAC address of the node's active network interface, as shown in the example screenshot, or another method such as the hostname or a different MAC address. The key requirement is that the method produces a unique and persistent initiator name for each node.

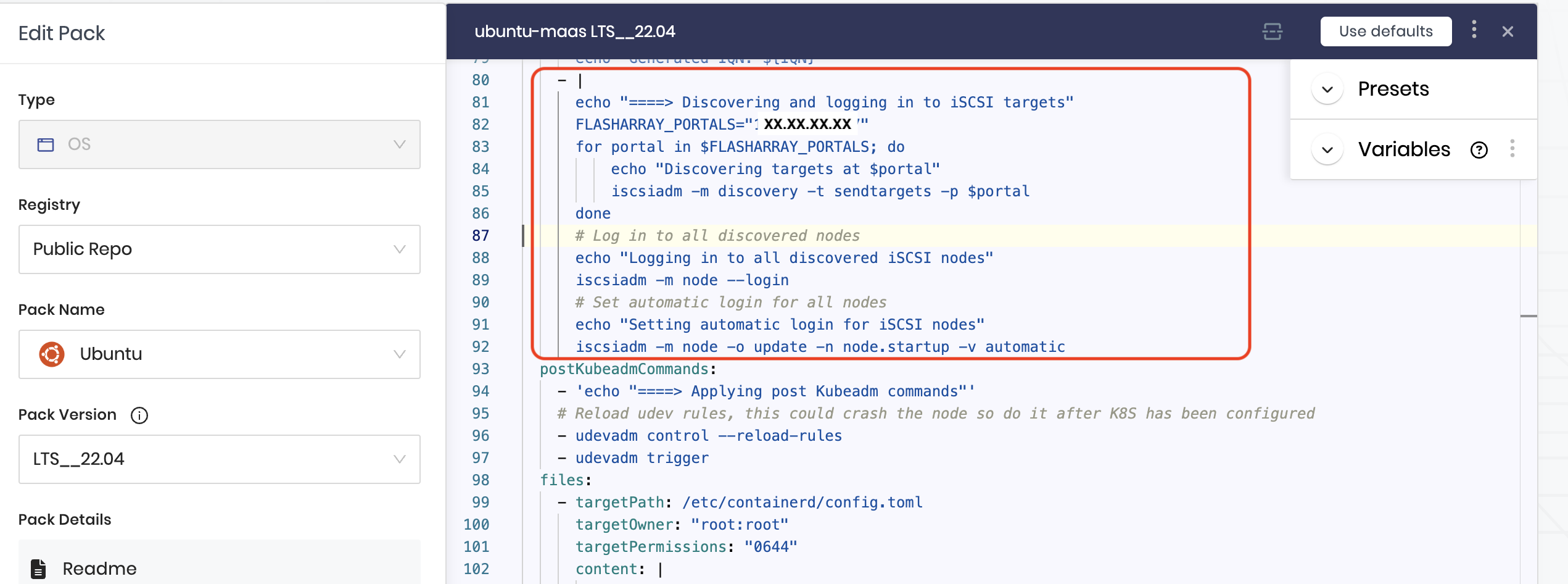
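As a minimal sketch of the MAC-based approach described above, the following shell helpers derive an initiator name from a MAC address. The function names are hypothetical, the `iqn.2004-10.com.ubuntu:01:` prefix is an assumption modeled on Ubuntu's default naming, and the script in the screenshot may differ.

```shell
# Hypothetical helper: build a stable IQN from a MAC address.
# The IQN prefix below is an assumption; use whatever prefix your site requires.
iqn_from_mac() {
  # Strip the colons and lowercase the hex digits so the suffix is canonical.
  mac=$(printf '%s' "$1" | tr -d ':' | tr 'A-F' 'a-f')
  printf 'iqn.2004-10.com.ubuntu:01:%s\n' "$mac"
}

# Hypothetical helper: MAC address of the interface that carries the default route.
default_iface_mac() {
  iface=$(ip route show default | awk '/^default/ { for (i = 1; i < NF; i++) if ($i == "dev") { print $(i + 1); exit } }')
  cat "/sys/class/net/$iface/address"
}

# Example use on a node (run as root; left commented out on purpose):
# echo "InitiatorName=$(iqn_from_mac "$(default_iface_mac)")" > /etc/iscsi/initiatorname.iscsi
```

Because the name depends only on the hardware address, a repaved node regenerates the same initiator name, provided the same interface still carries the default route.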

- Ensure each node completes iSCSI discovery and login before Portworx starts on the node.

Once the iSCSI initiator names are generated, each node must discover and log in to the iSCSI targets. To configure this, provide the FlashArray portal IP using the variable FLASHARRAY_PORTALS=XX.XX.XX.XX, as shown in the image. This step ensures that iSCSI discovery and login are re-established after a node repave, so that Portworx can connect to the storage backend when it starts.

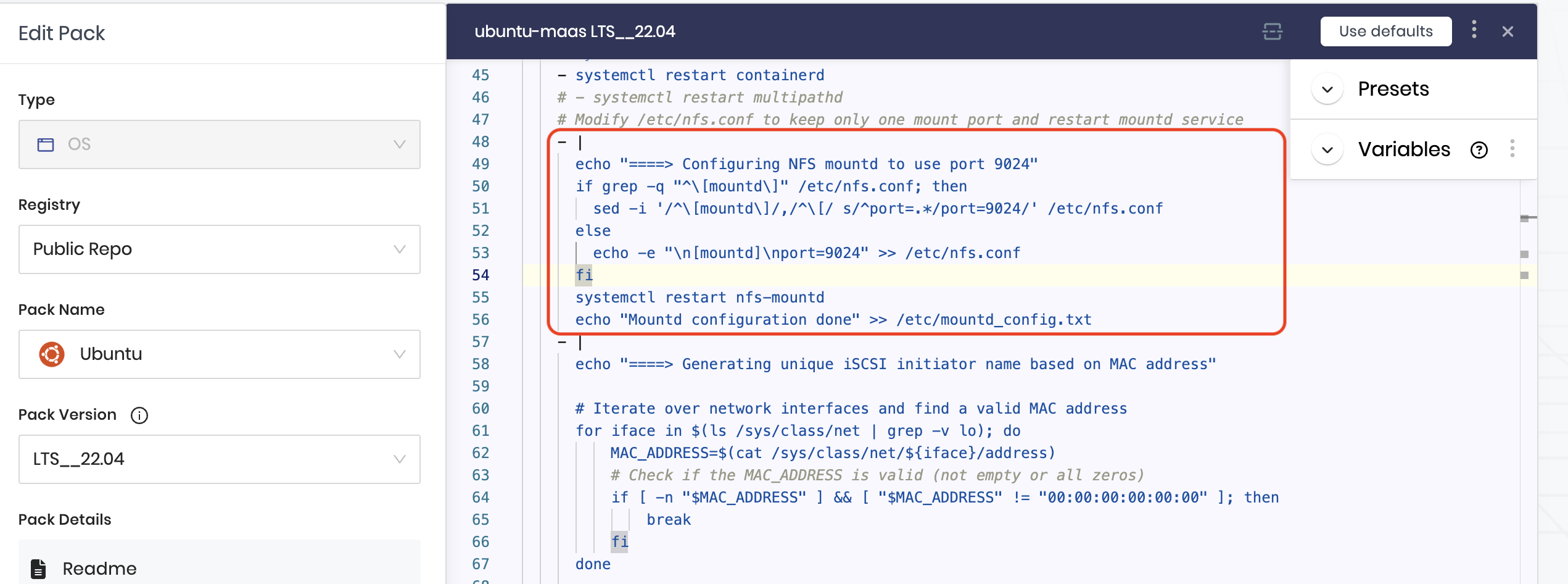
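The discovery and login step could look roughly like the sketch below. It assumes `FLASHARRAY_PORTALS` may hold one IP or a comma-separated list (the source shows a single IP), and the function name and the `ISCSIADM` override are hypothetical additions so that the commands can be dry-run; the script in the image may be structured differently.

```shell
# Allow the iscsiadm binary to be overridden, for example for a dry run.
ISCSIADM="${ISCSIADM:-iscsiadm}"

# Hypothetical helper: discover targets on each portal, then log in to them.
# $1: one portal IP, or several separated by commas (an assumption).
iscsi_discover_and_login() {
  for portal in $(printf '%s' "$1" | tr ',' ' '); do
    # SendTargets discovery against the portal.
    "$ISCSIADM" -m discovery -t sendtargets -p "$portal"
    # Log in to the node records discovered on that portal.
    "$ISCSIADM" -m node -p "$portal" --login
  done
}

# Example use on a node (run as root; left commented out on purpose):
# iscsi_discover_and_login "$FLASHARRAY_PORTALS"
```

Running this from the OS layer before the storage layer starts is what lets a repaved node re-establish its iSCSI paths ahead of Portworx.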

- Keep only one NFS mount port.

Configure NFS to operate on a consistent port so that mounts do not use random or varying ports, which can occur in some OS distributions or when using NFSv3. This configuration is essential because Portworx SharedV4 volumes rely on NFS ports for communication, regardless of whether a storage array backend is used. For more details, see the Portworx documentation on opening NFS ports.
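One possible way to pin the NFS helper ports is an `nfs.conf` fragment, sketched below. This assumes an OS whose nfs-utils reads `/etc/nfs.conf` (and its drop-in directory); the function name, the file name, and the port numbers in the usage line are placeholders, so take the actual values from the Portworx documentation on opening NFS ports.

```shell
# Hypothetical helper: print an nfs.conf fragment with fixed ports.
# $1: mountd port, $2: statd port, $3: lockd port (all placeholders).
render_nfs_conf() {
  mountd_port=$1
  statd_port=$2
  lockd_port=$3
  cat <<EOF
[mountd]
port=$mountd_port

[statd]
port=$statd_port

[lockd]
port=$lockd_port
udp-port=$lockd_port
EOF
}

# Example use on a node (run as root, then restart the NFS services; commented out on purpose):
# render_nfs_conf 20048 32765 32768 > /etc/nfs.conf.d/portworx.conf
```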

- Configure Kubernetes and CNI layers in the profile. For more information, refer to Create Infrastructure Profile.

Install Portworx

In the Storage layer, select Portworx as the base storage pack.

Configure Portworx Pack.

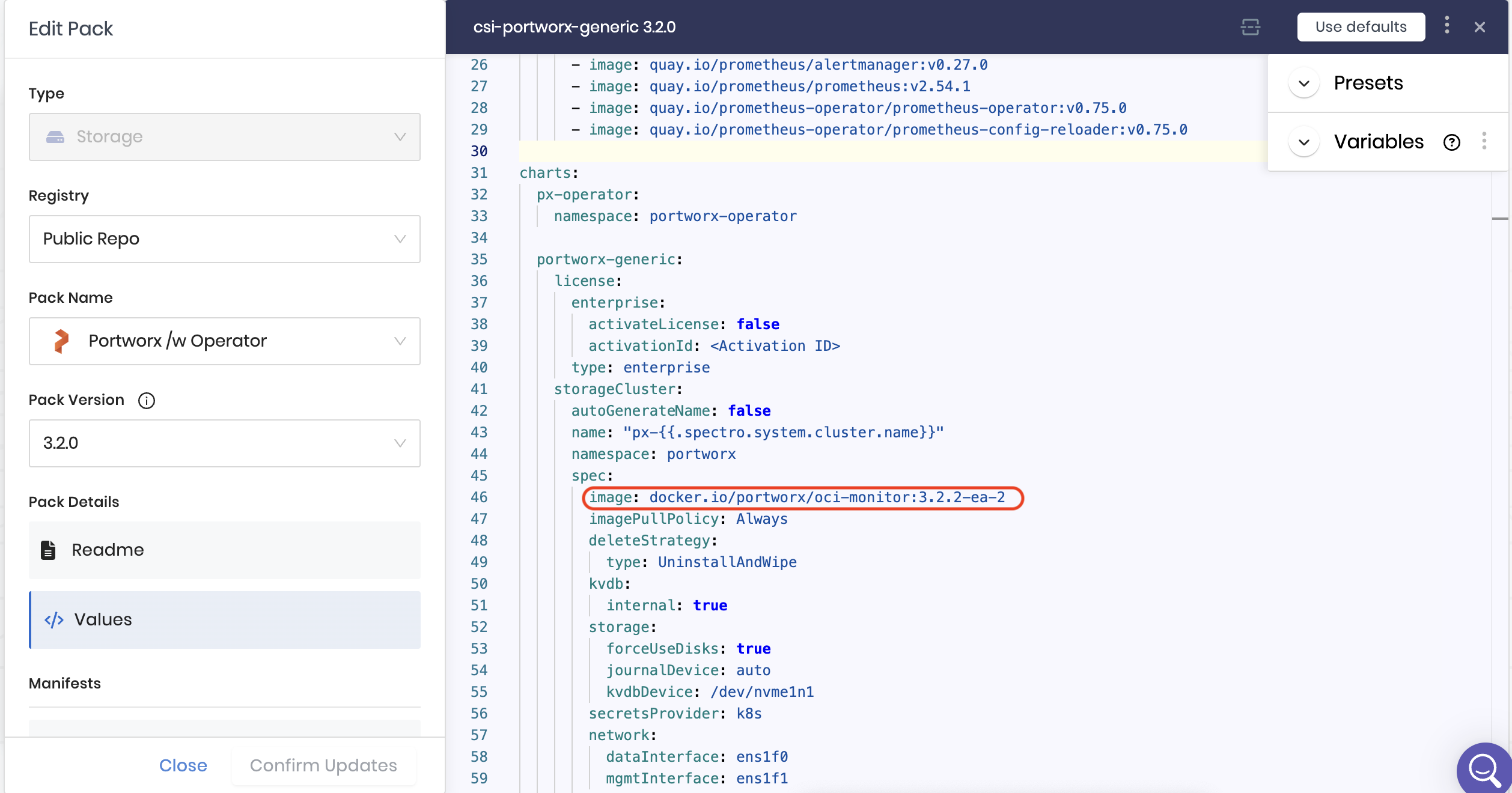

The Portworx /w Operator pack provides you with options to choose the Pack Version from the left sidebar. You can configure the version of Portworx by changing the image tag associated with the image below in the values under Pack Details in the left sidebar:

When overriding the image version, you should only change .z versions (patch versions) within the same .y release. For example, if you are using the 3.2.0 pack, you can deploy 3.2.1 or 3.2.2, but you should not use it to deploy 3.3.0 or any other .y version upgrade.

You should be able to find those image names under the Values.
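The patch-only rule above can be expressed as a small check. This is an illustrative sketch with a hypothetical function name; it assumes plain `x.y.z` version strings and is not part of the pack.

```shell
# Hypothetical helper: succeed only if two x.y.z versions share the same x.y.
same_minor_release() {
  pack_xy=$(printf '%s' "$1" | cut -d. -f1,2)
  image_xy=$(printf '%s' "$2" | cut -d. -f1,2)
  [ "$pack_xy" = "$image_xy" ]
}

# Example: a 3.2.0 pack may carry a 3.2.2 image tag, but not 3.3.0.
# same_minor_release 3.2.0 3.2.2 && echo "patch override is within the same .y release"
# same_minor_release 3.2.0 3.3.0 || echo "refuse: this is a .y upgrade"
```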

- Generate the StorageCluster from the PX Central UI. For additional configuration, refer to the Storage Specification section and the StorageCluster CRD.

- For detailed instructions on configuring Secrets and StorageClass parameters, refer to the operations section.

- If you need to configure IAM permissions, for example for AWS, refer to the Storage specification section in the Spectro Cloud documentation.

- To configure the Prometheus URL for Autopilot, follow the Install and Setup page.

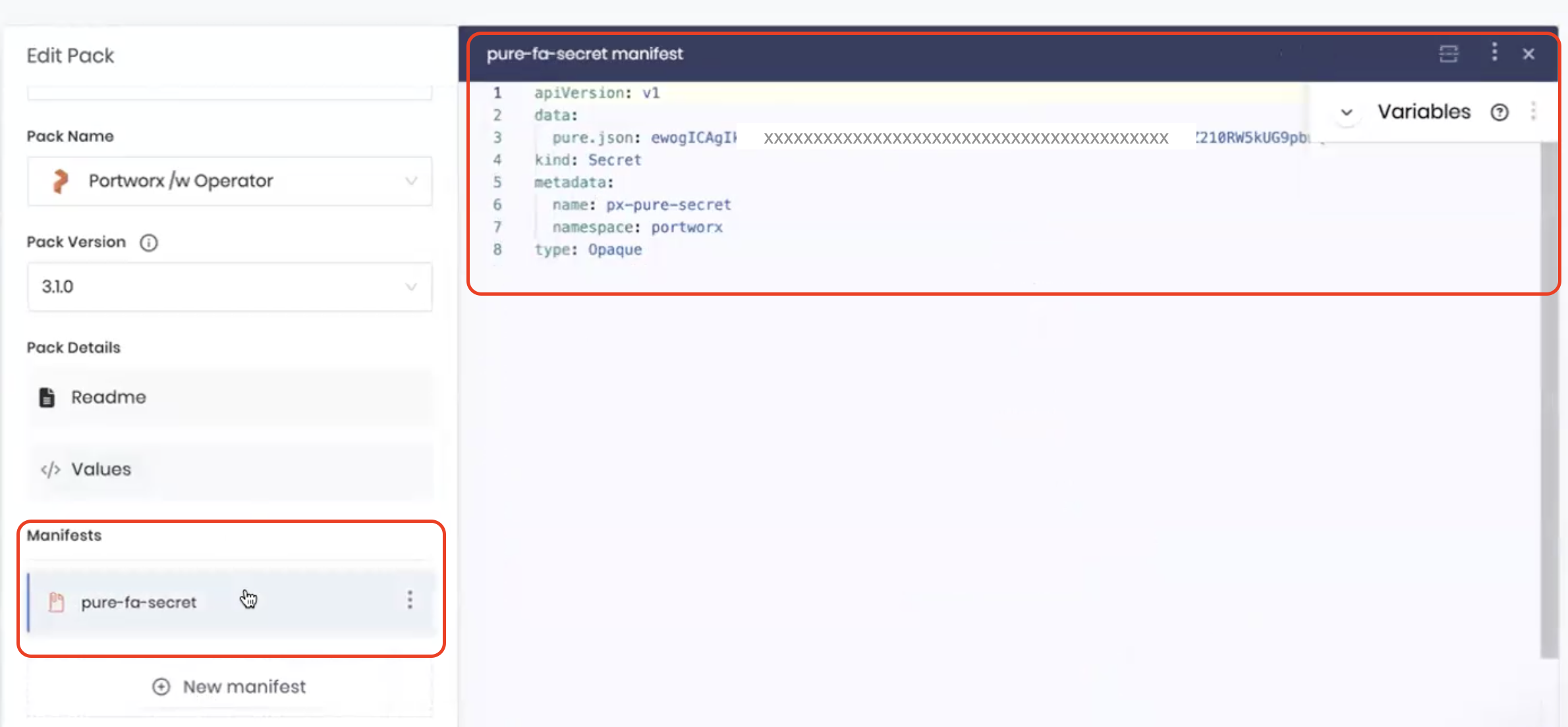

Add Pure Secrets Manifest

Create a secrets manifest to enable access to Pure FlashArray and attach it to the Portworx pack.

Refer to Create a JSON configuration file to enable Portworx to integrate with FlashArray.
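For orientation, the sketch below shows one way the JSON file and the secret manifest might be produced. The function name is hypothetical, and the `FlashArrays`, `MgmtEndPoint`, and `APIToken` keys, the `pure.json` file name, and the `px-pure-secret` secret name reflect the Portworx FlashArray documentation as best recalled here; confirm them against the linked page before use.

```shell
# Hypothetical helper: print a pure.json body for a single FlashArray.
# $1: FlashArray management endpoint, $2: API token.
render_pure_json() {
  printf '{\n  "FlashArrays": [\n    {\n      "MgmtEndPoint": "%s",\n      "APIToken": "%s"\n    }\n  ]\n}\n' "$1" "$2"
}

# Example use (commented out on purpose; replace the placeholders first):
# render_pure_json "<fa-management-endpoint>" "<fa-api-token>" > pure.json
# kubectl create secret generic px-pure-secret -n <px-namespace> \
#   --from-file=pure.json --dry-run=client -o yaml > px-pure-secret.yaml
```

The `--dry-run=client -o yaml` form only prints the Secret manifest, which can then be attached to the Portworx pack instead of being applied directly.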

Once complete, select the next layer and finish up the cluster profile creation.

Deploy Cluster Profile

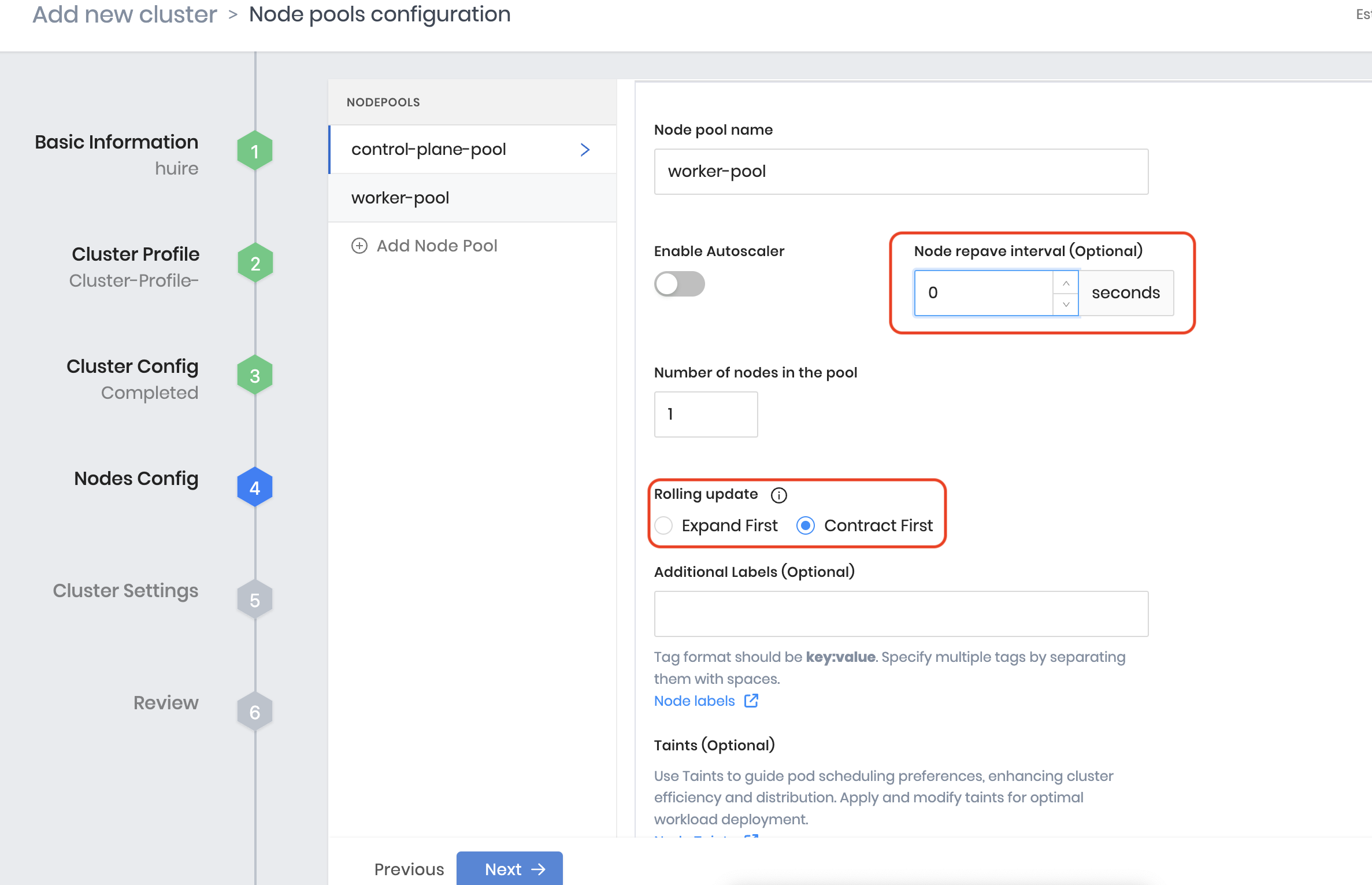

During deployment, configure the worker pool:

Set the Node Repave Interval to at least 600 seconds (10 minutes), and set Rolling Update to Contract First.

[Optional] Create an add-on Pack for Virtual Machine Orchestrator (VMO)

Palette Virtual Machine Orchestrator (VMO) provides a unified platform for deploying, managing, and scaling Virtual Machines (VMs) and containerized applications within Kubernetes clusters. To create an add-on pack, refer to Add Pack to Add-on Profile.

For more information on VMO, refer to the Virtual Machine Orchestrator page in the Spectro Cloud documentation.

Verify your Portworx installation

Once you've installed Portworx, you can perform the following tasks to verify that Portworx has installed correctly.

Verify if all pods are running

Enter the following kubectl get pods command to list and filter the results for Portworx pods:

kubectl get pods -n <px-namespace> -o wide | grep -e portworx -e px

portworx-api-774c2 1/1 Running 0 2m55s 192.168.121.196 username-k8s1-node0 <none> <none>

portworx-api-t4lf9 1/1 Running 0 2m55s 192.168.121.99 username-k8s1-node1 <none> <none>

portworx-api-dvw64 1/1 Running 0 2m55s 192.168.121.99 username-k8s1-node2 <none> <none>

portworx-kvdb-94bpk 1/1 Running 0 4s 192.168.121.196 username-k8s1-node0 <none> <none>

portworx-kvdb-8b67l 1/1 Running 0 10s 192.168.121.196 username-k8s1-node1 <none> <none>

portworx-kvdb-fj72p 1/1 Running 0 30s 192.168.121.196 username-k8s1-node2 <none> <none>

portworx-operator-58967ddd6d-kmz6c 1/1 Running 0 4m1s 10.244.1.99 username-k8s1-node0 <none> <none>

prometheus-px-prometheus-0 2/2 Running 0 2m41s 10.244.1.105 username-k8s1-node0 <none> <none>

px-cluster-xxxxxxxx-xxxx-xxxx-xxxx-3e9bf3cd834d-9gs79 2/2 Running 0 2m55s 192.168.121.196 username-k8s1-node0 <none> <none>

px-cluster-xxxxxxxx-xxxx-xxxx-xxxx-3e9bf3cd834d-vpptx 2/2 Running 0 2m55s 192.168.121.99 username-k8s1-node1 <none> <none>

px-cluster-xxxxxxxx-xxxx-xxxx-xxxx-3e9bf3cd834d-bxmpn 2/2 Running 0 2m55s 192.168.121.191 username-k8s1-node2 <none> <none>

px-csi-ext-868fcb9fc6-54bmc 4/4 Running 0 3m5s 10.244.1.103 username-k8s1-node0 <none> <none>

px-csi-ext-868fcb9fc6-8tk79 4/4 Running 0 3m5s 10.244.1.102 username-k8s1-node2 <none> <none>

px-csi-ext-868fcb9fc6-vbqzk 4/4 Running 0 3m5s 10.244.3.107 username-k8s1-node1 <none> <none>

px-prometheus-operator-59b98b5897-9nwfv 1/1 Running 0 3m3s 10.244.1.104 username-k8s1-node0 <none> <none>

Note the name of one of your px-cluster pods. You'll run pxctl commands from this pod in the following steps.
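If you prefer not to copy the pod name by hand, a filter along these lines could pick one from the listing above. The function name is hypothetical, and it assumes the default `kubectl get pods` column order (NAME, READY, STATUS, ...).

```shell
# Hypothetical helper: print the first px-cluster pod whose STATUS is Running.
# Reads `kubectl get pods` output on stdin.
first_running_px_pod() {
  awk '$1 ~ /^px-cluster-/ && $3 == "Running" { print $1; exit }'
}

# Example use (commented out on purpose; replace the namespace placeholder):
# PX_POD=$(kubectl get pods -n <px-namespace> --no-headers | first_running_px_pod)
```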

Verify Portworx cluster status

You can find the status of the Portworx cluster by running pxctl status commands from a pod. Enter the following kubectl exec command, specifying the pod name you retrieved in the previous section:

kubectl exec <pod-name> -n <px-namespace> -- /opt/pwx/bin/pxctl status

Defaulted container "portworx" out of: portworx, csi-node-driver-registrar

Status: PX is operational

Telemetry: Disabled or Unhealthy

Metering: Disabled or Unhealthy

License: Trial (expires in 31 days)

Node ID: xxxxxxxx-xxxx-xxxx-xxxx-70c31d0f478e

IP: 192.168.121.99

Local Storage Pool: 1 pool

POOL IO_PRIORITY RAID_LEVEL USABLE USED STATUS ZONE REGION

0 HIGH raid0 3.0 TiB 10 GiB Online default default

Local Storage Devices: 3 devices

Device Path Media Type Size Last-Scan

0:1 /dev/vdb STORAGE_MEDIUM_MAGNETIC 1.0 TiB 14 Jul 22 22:03 UTC

0:2 /dev/vdc STORAGE_MEDIUM_MAGNETIC 1.0 TiB 14 Jul 22 22:03 UTC

0:3 /dev/vdd STORAGE_MEDIUM_MAGNETIC 1.0 TiB 14 Jul 22 22:03 UTC

* Internal kvdb on this node is sharing this storage device /dev/vdc to store its data.

total - 3.0 TiB

Cache Devices:

* No cache devices

Cluster Summary

Cluster ID: px-cluster-xxxxxxxx-xxxx-xxxx-xxxx-3e9bf3cd834d

Cluster UUID: xxxxxxxx-xxxx-xxxx-xxxx-6f3fd5522eae

Scheduler: kubernetes

Nodes: 3 node(s) with storage (3 online)

IP ID SchedulerNodeName Auth StorageNode Used Capacity Status StorageStatus Version Kernel OS

192.168.121.196 xxxxxxxx-xxxx-xxxx-xxxx-fad8c65b8edc username-k8s1-node0 Disabled Yes 10 GiB 3.0 TiB Online Up 2.11.0-81faacc 3.10.0-1127.el7.x86_64 CentOS Linux 7 (Core)

192.168.121.99 xxxxxxxx-xxxx-xxxx-xxxx-70c31d0f478e username-k8s1-node1 Disabled Yes 10 GiB 3.0 TiB Online Up (This node) 2.11.0-81faacc 3.10.0-1127.el7.x86_64 CentOS Linux 7 (Core)

192.168.121.191 xxxxxxxx-xxxx-xxxx-xxxx-19d45b4c541a username-k8s1-node2 Disabled Yes 10 GiB 3.0 TiB Online Up 2.11.0-81faacc 3.10.0-1127.el7.x86_64 CentOS Linux 7 (Core)

Global Storage Pool

Total Used : 30 GiB

Total Capacity : 9.0 TiB

The Portworx status will display PX is operational if your cluster is running as intended.
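For scripted checks, the status line can be tested directly. This is a minimal sketch with a hypothetical function name; it only looks for the `Status: PX is operational` line shown in the sample output above.

```shell
# Hypothetical helper: succeed if pxctl status output (on stdin) reports an operational cluster.
px_is_operational() {
  grep -q '^Status: PX is operational'
}

# Example use (commented out on purpose; replace the placeholders):
# kubectl exec <pod-name> -n <px-namespace> -- /opt/pwx/bin/pxctl status | px_is_operational \
#   && echo "Portworx is operational"
```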

Verify pxctl cluster provision status

- Find the storage cluster. The status should show as Online:

kubectl -n <px-namespace> get storagecluster

NAME CLUSTER UUID STATUS VERSION AGE

px-cluster-xxxxxxxx-xxxx-xxxx-xxxx-3e9bf3cd834d xxxxxxxx-xxxx-xxxx-xxxx-6f3fd5522eae Online 2.11.0 10m

- Find the storage nodes. The statuses should show as Online:

kubectl -n <px-namespace> get storagenodes

NAME ID STATUS VERSION AGE

username-k8s1-node0 xxxxxxxx-xxxx-xxxx-xxxx-fad8c65b8edc Online 2.11.0-81faacc 11m

username-k8s1-node1 xxxxxxxx-xxxx-xxxx-xxxx-70c31d0f478e Online 2.11.0-81faacc 11m

username-k8s1-node2 xxxxxxxx-xxxx-xxxx-xxxx-19d45b4c541a Online 2.11.0-81faacc 11m

- Verify the Portworx cluster provision status. Enter the following kubectl exec command, specifying the pod name you retrieved in the previous section:

kubectl exec <pod-name> -n <px-namespace> -- /opt/pwx/bin/pxctl cluster provision-status

Defaulted container "portworx" out of: portworx, csi-node-driver-registrar

NODE NODE STATUS POOL POOL STATUS IO_PRIORITY SIZE AVAILABLE USED PROVISIONED ZONE REGION RACK

xxxxxxxx-xxxx-xxxx-xxxx-70c31d0f478e Up 0 ( xxxxxxxx-xxxx-xxxx-xxxx-4d74ecc7e159 ) Online HIGH 3.0 TiB 3.0 TiB 10 GiB 0 B default default default

xxxxxxxx-xxxx-xxxx-xxxx-fad8c65b8edc Up 0 ( xxxxxxxx-xxxx-xxxx-xxxx-97e4359e57c0 ) Online HIGH 3.0 TiB 3.0 TiB 10 GiB 0 B default default default

xxxxxxxx-xxxx-xxxx-xxxx-19d45b4c541a Up 0 ( xxxxxxxx-xxxx-xxxx-xxxx-8904cab0e019 ) Online HIGH 3.0 TiB 3.0 TiB 10 GiB 0 B default default default
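The storage node check in this section can also be automated. The sketch below assumes the `kubectl get storagenodes` column order shown earlier in this section (NAME, ID, STATUS, VERSION, AGE); the function name is hypothetical.

```shell
# Hypothetical helper: print the names of storage nodes whose STATUS is not Online.
# Reads `kubectl get storagenodes --no-headers` output on stdin.
offline_storage_nodes() {
  awk '$3 != "Online" { print $1 }'
}

# Example use (commented out on purpose; replace the namespace placeholder):
# kubectl -n <px-namespace> get storagenodes --no-headers | offline_storage_nodes
```

An empty result means every listed node reports Online.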

Create your first PVC

For your apps to use persistent volumes powered by Portworx, you must use a StorageClass that references Portworx as the provisioner. Portworx includes a number of default StorageClasses, which you can reference with PersistentVolumeClaims (PVCs) you create. For a more general overview of how storage works within Kubernetes, refer to the Persistent Volumes section of the Kubernetes documentation.

Perform the following steps to create a PVC:

- Create a PVC referencing the px-csi-db default StorageClass and save the file:

kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: px-check-pvc
spec:
  storageClassName: px-csi-db
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 2Gi

- Run the kubectl apply command to create a PVC:

kubectl apply -f <your-pvc-name>.yaml

persistentvolumeclaim/px-check-pvc created

Verify your StorageClass and PVC

- Enter the kubectl get storageclass command:

kubectl get storageclass

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

px-csi-db pxd.portworx.com Delete Immediate true 43d

px-csi-db-cloud-snapshot pxd.portworx.com Delete Immediate true 43d

px-csi-db-cloud-snapshot-encrypted pxd.portworx.com Delete Immediate true 43d

px-csi-db-encrypted pxd.portworx.com Delete Immediate true 43d

px-csi-db-local-snapshot pxd.portworx.com Delete Immediate true 43d

px-csi-db-local-snapshot-encrypted pxd.portworx.com Delete Immediate true 43d

px-csi-replicated pxd.portworx.com Delete Immediate true 43d

px-csi-replicated-encrypted pxd.portworx.com Delete Immediate true 43d

px-db kubernetes.io/portworx-volume Delete Immediate true 43d

px-db-cloud-snapshot kubernetes.io/portworx-volume Delete Immediate true 43d

px-db-cloud-snapshot-encrypted kubernetes.io/portworx-volume Delete Immediate true 43d

px-db-encrypted kubernetes.io/portworx-volume Delete Immediate true 43d

px-db-local-snapshot kubernetes.io/portworx-volume Delete Immediate true 43d

px-db-local-snapshot-encrypted kubernetes.io/portworx-volume Delete Immediate true 43d

px-replicated kubernetes.io/portworx-volume Delete Immediate true 43d

px-replicated-encrypted kubernetes.io/portworx-volume Delete Immediate true 43d

stork-snapshot-sc stork-snapshot Delete Immediate true 43d

kubectl returns details about the StorageClasses available to you. Verify that px-csi-db appears in the list.

- Enter the kubectl get pvc command. If this is the only StorageClass and PVC that you've created, you should see only one entry in the output:

kubectl get pvc <your-pvc-name>

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

px-check-pvc Bound pvc-xxxxxxxx-xxxx-xxxx-xxxx-2377767c8ce0 2Gi RWO px-csi-db 3m7s

kubectl displays details about your PVC if it was created correctly. Verify that the configuration details match your expectations.

- After verifying, delete the PVC created in the earlier steps:

kubectl delete pvc <your-pvc-name>

persistentvolumeclaim "px-check-pvc" deleted
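If you script this PVC round trip, the Bound check belongs before the delete step. The following sketch shows one way to test it; the function name is hypothetical, and it assumes the default `kubectl get pvc` column order (NAME, STATUS, ...).

```shell
# Hypothetical helper: succeed if the named PVC is listed with STATUS Bound.
# $1: PVC name; reads `kubectl get pvc` output on stdin.
pvc_is_bound() {
  awk -v name="$1" '$1 == name && $2 == "Bound" { found = 1 } END { exit found ? 0 : 1 }'
}

# Example use (commented out on purpose):
# kubectl get pvc --no-headers | pvc_is_bound px-check-pvc && kubectl delete pvc px-check-pvc
```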