Install Portworx on OpenShift on vSphere using Helm

You can deploy the Portworx Operator, Portworx Enterprise, and Stork using the Portworx Helm chart. Before you begin the installation, make sure you meet the prerequisites listed in the following section.

Prerequisites

- Your cluster must be running OpenShift 4 or higher.

- You must have an OpenShift cluster deployed on infrastructure that meets the minimum requirements for Portworx.

- Any underlying nodes used for Portworx in OCP (OpenShift Container Platform) should have Secure Boot disabled.

- Your nodes must use supported disk types.

- You must have Helm installed on the client machine. For information on how to install Helm, see Installing Helm.

- Review the Helm compatibility matrix and the configurable parameters.

Create a monitoring ConfigMap

Newer OpenShift versions do not support the Portworx Prometheus deployment. As a result, you must enable monitoring for user-defined projects before installing the Portworx Operator. Use the instructions in this section to configure the OpenShift Prometheus deployment to monitor Portworx metrics.

To integrate OpenShift’s monitoring and alerting system with Portworx, create a cluster-monitoring-config ConfigMap in the openshift-monitoring namespace:

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: cluster-monitoring-config
  namespace: openshift-monitoring
data:
  config.yaml: |
    enableUserWorkload: true
```

The enableUserWorkload parameter enables monitoring for user-defined projects in the OpenShift cluster. This creates a prometheus-operated service in the openshift-user-workload-monitoring namespace.
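To confirm that the ConfigMap took effect, you can list the services in the openshift-user-workload-monitoring namespace and look for prometheus-operated. The following is a minimal sketch: has_prometheus_operated is a hypothetical helper, not an OpenShift command, and it only inspects the text printed by oc get svc, assuming the service name appears in the first column.

```shell
# Hypothetical helper: succeed if a service named "prometheus-operated" appears
# in the first column of `oc get svc` output read from stdin.
has_prometheus_operated() {
  awk '$1 == "prometheus-operated" { found = 1 } END { exit !found }'
}

# Usage (requires a logged-in oc session):
#   oc get svc -n openshift-user-workload-monitoring | has_prometheus_operated && echo "user workload monitoring is enabled"
```

A direct oc get svc prometheus-operated -n openshift-user-workload-monitoring also works; the helper form is only convenient when you want a pass/fail exit code in a script.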

Configure Storage DRS settings

Portworx does not support moving VMDK files off the datastores on which they were created. Do not move these files manually, and do not configure any settings that would cause them to be moved.

To prevent Storage DRS from moving VMDK files, configure the Storage DRS settings as follows using your vSphere console.

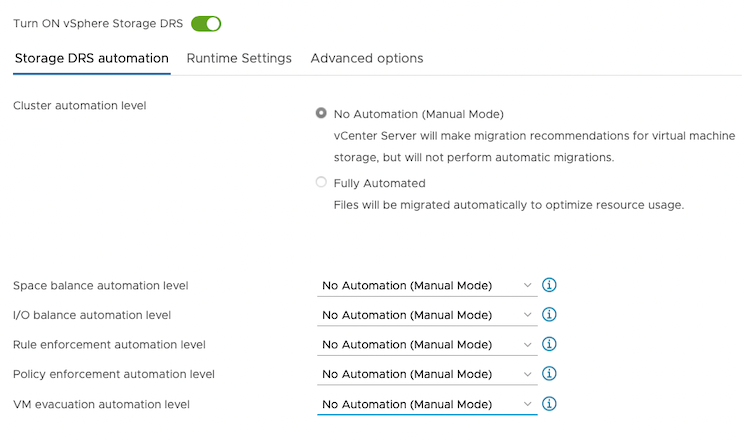

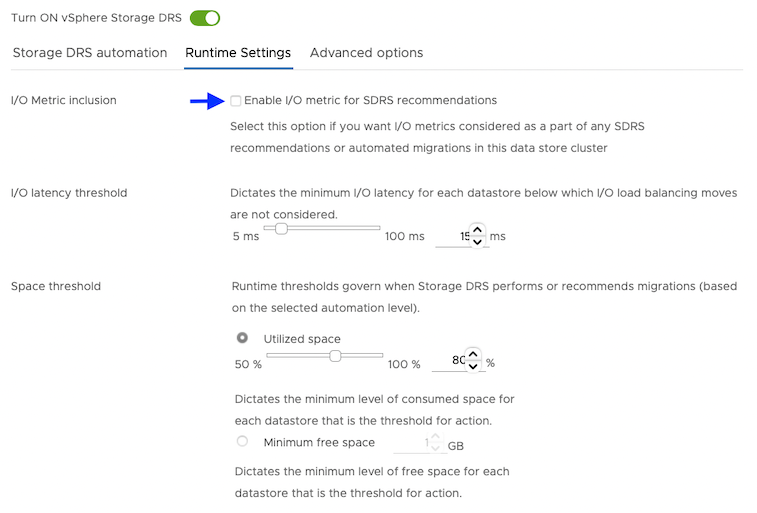

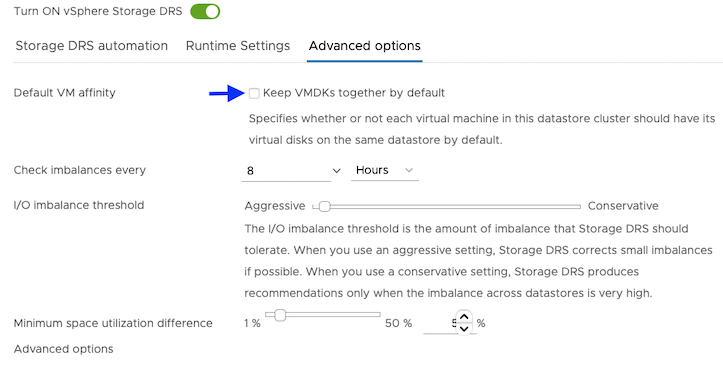

From the Edit Storage DRS Settings window of your selected datastore cluster, edit the following settings:

- For Storage DRS automation, choose the No Automation (Manual Mode) option, and set the same for the other settings, as shown in the screen captures above.
- For Runtime Settings, clear the Enable I/O metric for SDRS recommendations option.
- For Advanced options, clear the Keep VMDKs together by default option.

Grant the required cloud permissions

Grant Portworx the permissions it requires by creating a secret that contains vCenter user credentials:

- Using your vSphere console, provide Portworx with a vCenter server user that has the following minimum vSphere privileges at the vCenter datacenter level:
  - Datastore
    - Allocate space
    - Browse datastore
    - Low level file operations
    - Remove file
  - Host
    - Local operations
      - Reconfigure virtual machine
  - Virtual machine
    - Change Configuration
      - Add existing disk
      - Add new disk
      - Add or remove device
      - Advanced configuration
      - Change Settings
      - Extend virtual disk
      - Modify device settings
      - Remove disk

If you create a custom role as above, make sure to select Propagate to children when assigning the user to the role.

Why select Propagate to children? In vSphere, resources are organized hierarchically. Selecting Propagate to children ensures that the permissions granted to the custom role apply not only to the targeted object but also to every object in its subtree, including VMs, datastores, networks, and other resources nested under the selected resource.

- Create a secret using the following template. Retrieve the credentials from your own environment and specify them, base64-encoded, under the data section:

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: px-vsphere-secret
  namespace: portworx
type: Opaque
data:
  VSPHERE_USER: <your-vcenter-server-user>
  VSPHERE_PASSWORD: <your-vcenter-server-password>
```

  - VSPHERE_USER: to find your base64-encoded vSphere user, enter the following command (the -n flag keeps a trailing newline out of the encoded value):

```shell
echo -n '<vcenter-server-user>' | base64
```

  - VSPHERE_PASSWORD: to find your base64-encoded vSphere password, enter the following command:

```shell
echo -n '<vcenter-server-password>' | base64
```

  Once you've updated the template with your user and password, apply the spec:

```shell
oc apply -f <your-spec-name>
```

- Ensure that ports 17001-17020 on worker nodes are reachable from the control plane nodes and from the other worker nodes.
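Rather than encoding each credential by hand for the secret step above, you can render the Secret with a small shell function. This is a sketch, not an official tool: b64 and render_vsphere_secret are hypothetical names, and the function assumes the portworx namespace used in this guide. It uses printf '%s' so that no trailing newline ends up inside the encoded value, and it strips the line wrapping that GNU base64 adds to long inputs.

```shell
# Hypothetical helper: base64-encode a value without a trailing newline.
# `tr -d '\n'` removes the line breaks GNU base64 inserts every 76 characters.
b64() {
  printf '%s' "$1" | base64 | tr -d '\n'
}

# Hypothetical helper: print the px-vsphere-secret manifest for the given
# vCenter user ($1) and password ($2), with both values base64-encoded.
render_vsphere_secret() {
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: px-vsphere-secret
  namespace: portworx
type: Opaque
data:
  VSPHERE_USER: $(b64 "$1")
  VSPHERE_PASSWORD: $(b64 "$2")
EOF
}

# Usage (requires a logged-in oc session and an existing portworx namespace):
#   render_vsphere_secret '<vcenter-server-user>' '<vcenter-server-password>' | oc apply -f -
```

As an alternative, oc create secret generic px-vsphere-secret --from-literal=VSPHERE_USER=... --from-literal=VSPHERE_PASSWORD=... -n portworx performs the encoding for you.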

Deploy Portworx using Helm

By default, Portworx is installed in the kube-system namespace. To install it in a different namespace, use the -n <namespace> flag. In this example, Portworx is deployed in the portworx namespace.

- To install Portworx, add the portworx/helm repository to your local Helm repository:

```shell
helm repo add portworx https://raw.githubusercontent.com/portworx/helm/master/stable/
```

```
"portworx" has been added to your repositories
```

- Verify that the repository has been successfully added:

```shell
helm repo list
```

```
NAME      URL
portworx  https://raw.githubusercontent.com/portworx/helm/master/stable/
```

- Create a px_install_values.yaml file and add the following parameters:

```yaml
openshiftInstall: true
drives: 'type=thin,size=150'
envs:
  - name: VSPHERE_INSECURE
    value: 'true'
  - name: VSPHERE_USER
    valueFrom:
      secretKeyRef:
        name: px-vsphere-secret
        key: VSPHERE_USER
  - name: VSPHERE_PASSWORD
    valueFrom:
      secretKeyRef:
        name: px-vsphere-secret
        key: VSPHERE_PASSWORD
  - name: VSPHERE_VCENTER
    value: <your-vCenter-Endpoint>
  - name: VSPHERE_VCENTER_PORT
    value: <your-vCenter-Port>
  - name: VSPHERE_DATASTORE_PREFIX
    value: <your-vCenter-Datastore-prefix>
  - name: VSPHERE_INSTALL_MODE
    value: shared
```

- (Optional) In many cases, you may want to customize the Portworx configuration, for example to enable monitoring or to specify storage devices. You can pass the custom configuration in the px_install_values.yaml file.

  Note:
  - For a list of configurable parameters, see the Portworx Helm chart parameters. For a configuration file template, see the values.yaml file.
  - The default clusterName is mycluster. We recommend changing it to a unique identifier to avoid conflicts in multi-cluster environments.

- Install Portworx Enterprise using the following command:

```shell
helm install <px-release> portworx/portworx -n <portworx> -f px_install_values.yaml --debug
```

  Note: To install a specific version of the Helm chart, use the --version flag. For example: helm install <px-release> portworx/portworx --version <helm-chart-version>.

- You can check the status of your Portworx installation:

```shell
helm status <px-release> -n <portworx>
```

```
NAME: px-release
LAST DEPLOYED: Thu Sep 26 05:53:17 2024
NAMESPACE: portworx
STATUS: deployed
REVISION: 1
TEST SUITE: None
NOTES:
Your Release is named "px-release"
Portworx Pods should be running on each node in your cluster.
Portworx would create a unified pool of the disks attached to your Kubernetes nodes.
No further action should be required and you are ready to consume Portworx Volumes as part of your application data requirements.
```
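If you deploy to several clusters, it can help to generate px_install_values.yaml from variables instead of editing the placeholders by hand. The sketch below is hypothetical (render_px_values is not part of the Portworx chart) and simply reproduces the values shown in the deployment steps above. It quotes each substituted value so that YAML treats it as a string, since Kubernetes expects environment variable values to be strings; it assumes the values contain no single quotes.

```shell
# Hypothetical helper: print Helm values for Portworx on vSphere.
# $1 = vCenter endpoint, $2 = vCenter port, $3 = datastore prefix.
render_px_values() {
  cat <<EOF
openshiftInstall: true
drives: 'type=thin,size=150'
envs:
  - name: VSPHERE_INSECURE
    value: 'true'
  - name: VSPHERE_USER
    valueFrom:
      secretKeyRef:
        name: px-vsphere-secret
        key: VSPHERE_USER
  - name: VSPHERE_PASSWORD
    valueFrom:
      secretKeyRef:
        name: px-vsphere-secret
        key: VSPHERE_PASSWORD
  - name: VSPHERE_VCENTER
    value: '$1'
  - name: VSPHERE_VCENTER_PORT
    value: '$2'
  - name: VSPHERE_DATASTORE_PREFIX
    value: '$3'
  - name: VSPHERE_INSTALL_MODE
    value: shared
EOF
}

# Usage (the arguments are placeholders for your own environment):
#   render_px_values <your-vCenter-Endpoint> <your-vCenter-Port> <your-vCenter-Datastore-prefix> > px_install_values.yaml
```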

Verify your Portworx installation

Once you've installed Portworx, you can perform the following tasks to verify that Portworx has been installed correctly.

Verify if all pods are running

Enter the following oc get pods command to list and filter the results for Portworx pods:

```shell
oc get pods -n portworx -o wide | grep -e portworx -e px
```

```
portworx-api-774c2 1/1 Running 0 2m55s 192.168.121.196 username-k8s1-node0 <none> <none>
portworx-api-t4lf9 1/1 Running 0 2m55s 192.168.121.99 username-k8s1-node1 <none> <none>
portworx-kvdb-94bpk 1/1 Running 0 4s 192.168.121.196 username-k8s1-node0 <none> <none>
portworx-operator-xxxx-xxxxxxxxxxxxx 1/1 Running 0 4m1s 10.244.1.99 username-k8s1-node0 <none> <none>
prometheus-px-prometheus-0 2/2 Running 0 2m41s 10.244.1.105 username-k8s1-node0 <none> <none>
px-cluster-1c3edc42-4541-48fc-b173-xxxx-xxxxxxxxxxxxx 2/2 Running 0 2m55s 192.168.121.196 username-k8s1-node0 <none> <none>
px-cluster-1c3edc42-4541-48fc-b173-xxxx-xxxxxxxxxxxxx 1/2 Running 0 2m55s 192.168.121.99 username-k8s1-node1 <none> <none>
px-csi-ext-868fcb9fc6-xxxxx 4/4 Running 0 3m5s 10.244.1.103 username-k8s1-node0 <none> <none>
px-csi-ext-868fcb9fc6-xxxxx 4/4 Running 0 3m5s 10.244.1.102 username-k8s1-node0 <none> <none>
px-csi-ext-868fcb9fc6-xxxxx 4/4 Running 0 3m5s 10.244.3.107 username-k8s1-node1 <none> <none>
px-prometheus-operator-59b98b5897-xxxxx 1/1 Running 0 3m3s 10.244.1.104 username-k8s1-node0 <none> <none>
```

Note the name of one of your px-cluster pods. You'll run pxctl commands from these pods in the following steps.
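In the sample pod listing above, one px-cluster pod reports 1/2 ready containers, which usually means it is still starting. The following sketch flags any pod that is not Running or whose READY column is not n/n. pods_not_ready is a hypothetical helper that assumes the default column order of oc get pods (NAME, READY, STATUS, ...), with or without the header row.

```shell
# Hypothetical helper: read `oc get pods` output on stdin and print the name
# of every pod that is not Running or not fully ready.
pods_not_ready() {
  awk 'NF > 0 && $1 != "NAME" {
    split($2, r, "/")                 # READY column, e.g. 1/2
    if ($3 != "Running" || r[1] != r[2]) print $1
  }'
}

# Usage (requires a logged-in oc session):
#   oc get pods -n portworx | pods_not_ready
```

An empty result means every listed pod is Running with all of its containers ready; rerun it after a minute or two if a px-cluster pod is still initializing.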

Verify Portworx cluster status

You can find the status of the Portworx cluster by running pxctl status commands from a pod. Enter the following oc exec command, specifying the pod name you retrieved in the previous section:

```shell
oc exec px-cluster-1c3edc42-4541-48fc-b173-xxxx-xxxxxxxxxxxxx -n portworx -- /opt/pwx/bin/pxctl status
```

```
Defaulted container "portworx" out of: portworx, csi-node-driver-registrar
Status: PX is operational
Telemetry: Disabled or Unhealthy
Metering: Disabled or Unhealthy
License: Trial (expires in 31 days)
Node ID: 788bf810-57c4-4df1-xxxx-xxxxxxxxxxxxx
IP: 192.168.121.99
Local Storage Pool: 1 pool
POOL IO_PRIORITY RAID_LEVEL USABLE USED STATUS ZONE REGION
0 HIGH raid0 3.0 TiB 10 GiB Online default default
Local Storage Devices: 3 devices
Device Path Media Type Size Last-Scan
0:1 /dev/vdb STORAGE_MEDIUM_MAGNETIC 1.0 TiB 14 Jul 22 22:03 UTC
0:2 /dev/vdc STORAGE_MEDIUM_MAGNETIC 1.0 TiB 14 Jul 22 22:03 UTC
0:3 /dev/vdd STORAGE_MEDIUM_MAGNETIC 1.0 TiB 14 Jul 22 22:03 UTC
* Internal kvdb on this node is sharing this storage device /dev/vdc to store its data.
total - 3.0 TiB
Cache Devices:
* No cache devices
Cluster Summary
Cluster ID: px-cluster-1c3edc42-xxxx-xxxxxxxxxxxxx
Cluster UUID: 33a82fe9-d93b-435b-xxxx-xxxxxxxxxxxxx
Scheduler: kubernetes
Nodes: 2 node(s) with storage (2 online)
IP ID SchedulerNodeName Auth StorageNode Used Capacity Status StorageStatus Version Kernel OS
192.168.121.196 f6d87392-81f4-459a-xxxx-xxxxxxxxxxxxx username-k8s1-node0 Disabled Yes 10 GiB 3.0 TiB Online Up 2.11.0-81faacc 3.10.0-1127.el7.x86_64 CentOS Linux 7 (Core)
192.168.121.99 788bf810-57c4-4df1-xxxx-xxxxxxxxxxxxx username-k8s1-node1 Disabled Yes 10 GiB 3.0 TiB Online Up (This node) 2.11.0-81faacc 3.10.0-1127.el7.x86_64 CentOS Linux 7 (Core)
Global Storage Pool
Total Used : 20 GiB
Total Capacity : 6.0 TiB
```

The Portworx status will display PX is operational if your cluster is running as intended.
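To script this check, you can look for the PX is operational line and compare the two counts in the Nodes: summary line. This is a sketch that assumes the output format shown above (for example, Nodes: 2 node(s) with storage (2 online)); px_status_ok is a hypothetical helper, and the pxctl output format may differ between Portworx versions.

```shell
# Hypothetical helper: read `pxctl status` output on stdin and succeed only if
# PX is operational and every storage node counted in "Nodes:" is online.
px_status_ok() {
  awk '
    /^[[:space:]]*Status: PX is operational/ { operational = 1 }
    $1 == "Nodes:" {
      total = $2                      # e.g. Nodes: 2 node(s) with storage (2 online)
      online = $6
      gsub(/[()]/, "", online)        # turn "(2" into "2"
    }
    END { exit !(operational && total != "" && total == online) }
  '
}

# Usage (requires a logged-in oc session; use your own px-cluster pod name):
#   oc exec <px-cluster-pod> -n portworx -- /opt/pwx/bin/pxctl status | px_status_ok && echo "cluster healthy"
```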

Verify pxctl cluster provision status

- Find the storage cluster; its status should show as Online:

```shell
oc -n portworx get storagecluster
```

```
NAME CLUSTER UUID STATUS VERSION AGE
px-cluster-1c3edc42-4541-48fc-xxxx-xxxxxxxxxxxxx 33a82fe9-d93b-435b-xxxx-xxxxxxxxxxxx Online 2.11.0 10m
```

- Find the storage nodes; their statuses should show as Online:

```shell
oc -n portworx get storagenodes
```

```
NAME ID STATUS VERSION AGE
username-k8s1-node0 f6d87392-81f4-459a-xxxx-xxxxxxxxxxxxx Online 2.11.0-81faacc 11m
username-k8s1-node1 788bf810-57c4-4df1-xxxx-xxxxxxxxxxxxx Online 2.11.0-81faacc 11m
```

- Verify the Portworx cluster provision status. Enter the following oc exec command, specifying the pod name you retrieved in the previous section:

```shell
oc exec px-cluster-1c3edc42-4541-48fc-b173-xxxx-xxxxxxxxxxxxx -n portworx -- /opt/pwx/bin/pxctl cluster provision-status
```

```
Defaulted container "portworx" out of: portworx, csi-node-driver-registrar
NODE NODE STATUS POOL POOL STATUS IO_PRIORITY SIZE AVAILABLE USED PROVISIONED ZONE REGION RACK
788bf810-57c4-4df1-xxxx-xxxxxxxxxxxx Up 0 ( 96e7ff01-fcff-4715-xxxx-xxxxxxxxxxxx ) Online HIGH 3.0 TiB 3.0 TiB 10 GiB 0 B default default default
f6d87392-81f4-459a-xxxx-xxxxxxxxx Up 0 ( e06386e7-b769-xxxx-xxxxxxxxxxxxx ) Online HIGH 3.0 TiB 3.0 TiB 10 GiB 0 B default default default
```
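The storage cluster and storage node checks above can each be reduced to a pass/fail exit code. A minimal sketch, assuming STATUS is the third whitespace-separated column, as it is in both sample outputs; all_online is a hypothetical helper, not part of oc or pxctl.

```shell
# Hypothetical helper: read `oc get storagecluster` or `oc get storagenodes`
# output on stdin, print rows whose STATUS (third column) is not "Online",
# and fail if any were found or if no data rows were seen at all.
all_online() {
  awk '
    $1 == "NAME" { next }             # skip the header row
    NF == 0 { next }                  # skip blank lines
    {
      rows++
      if ($3 != "Online") { print $1 ": " $3; bad = 1 }
    }
    END { exit (bad || rows == 0) }
  '
}

# Usage (requires a logged-in oc session):
#   oc -n portworx get storagecluster | all_online && oc -n portworx get storagenodes | all_online
```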

Create your first PVC

For your apps to use persistent volumes powered by Portworx, you must use a StorageClass that references Portworx as the provisioner. Portworx includes a number of default StorageClasses, which you can reference with PersistentVolumeClaims (PVCs) you create. For a more general overview of how storage works within Kubernetes, refer to the Persistent Volumes section of the Kubernetes documentation.

Perform the following steps to create a PVC:

- Create a PVC referencing the px-csi-db default StorageClass and save the file:

```yaml
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: px-check-pvc
spec:
  storageClassName: px-csi-db
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 2Gi
```

- Run the oc apply command to create a PVC:

```shell
oc apply -f <your-pvc-name>.yaml
```

```
persistentvolumeclaim/px-check-pvc created
```
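If you want to create test PVCs with different names or sizes, a parameterized heredoc avoids editing the YAML each time. This sketch is hypothetical (render_pvc is not a Portworx or oc command); it defaults to the 2Gi size and the px-csi-db StorageClass used in the steps above.

```shell
# Hypothetical helper: print a PVC manifest.
# $1 = PVC name, $2 = requested size (default 2Gi), $3 = StorageClass (default px-csi-db).
render_pvc() {
  cat <<EOF
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: $1
spec:
  storageClassName: ${3:-px-csi-db}
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: ${2:-2Gi}
EOF
}

# Usage (requires a logged-in oc session):
#   render_pvc px-check-pvc 2Gi | oc apply -f -
```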

Verify your StorageClass and PVC

- Enter the following oc get storageclass command, specifying the name of the StorageClass that your PVC references (px-csi-db in this example):

```shell
oc get storageclass <your-storageclass-name>
```

```
NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE
px-csi-db pxd.portworx.com Delete Immediate false 24m
```

  If the StorageClass exists, oc returns its details. Verify that the configuration details appear as you intended.

- Enter the oc get pvc command. If this is the only StorageClass and PVC you've created, you should see only one entry in the output:

```shell
oc get pvc <your-pvc-name>
```

```
NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE
px-check-pvc Bound pvc-dce346e8-ff02-4dfb-xxxx-xxxxxxxxxxxxx 2Gi RWO px-csi-db 3m7s
```

  If the PVC was created correctly, oc returns its details. Verify that the configuration details appear as you intended.
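With the Immediate volume binding mode shown above, the PVC should reach the Bound status shortly after creation. The sketch below polls for that status; pvc_status and wait_for_bound are hypothetical helpers, and the 12 attempts at 5-second intervals are arbitrary choices. pvc_status assumes STATUS is the second column of oc get pvc output.

```shell
# Hypothetical helper: read `oc get pvc` output on stdin and print the STATUS
# (second column) of the PVC named $1, or nothing if it is not listed.
pvc_status() {
  awk -v name="$1" '$1 == name { print $2 }'
}

# Hypothetical polling loop: wait up to about 60 seconds for PVC $1 to become
# Bound. Requires a logged-in oc session in the PVC's namespace.
wait_for_bound() {
  for _ in $(seq 1 12); do
    [ "$(oc get pvc "$1" | pvc_status "$1")" = "Bound" ] && return 0
    sleep 5
  done
  return 1
}

# Usage:
#   wait_for_bound px-check-pvc && echo "px-check-pvc is Bound"
```

oc get pvc px-check-pvc -o jsonpath='{.status.phase}' returns the same phase without text parsing, if you prefer that form inside the loop.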

Update Portworx Configuration using Helm

To update the Portworx configuration, modify the parameters in the px_install_values.yaml file that you specified during the Helm installation, and then apply the updated file with helm upgrade.

- Edit the px_install_values.yaml file you used during installation to add or update the desired parameters. Keep the existing entries: when you pass a values file to helm upgrade, parameters that are no longer in the file revert to the chart defaults.

```shell
vim px_install_values.yaml
```

```yaml
monitoring:
  telemetry: false
  grafana: true
```

- Apply the changes using the following command:

```shell
helm upgrade <px-release> portworx/portworx -n <portworx> -f px_install_values.yaml
```

```
Release "px-release" has been upgraded. Happy Helming!
NAME: px-release
LAST DEPLOYED: Thu Sep 26 06:42:20 2024
NAMESPACE: portworx
STATUS: deployed
REVISION: 2
TEST SUITE: None
NOTES:
Your Release is named "px-release"
Portworx Pods should be running on each node in your cluster.
Portworx would create a unified pool of the disks attached to your Kubernetes nodes.
No further action should be required and you are ready to consume Portworx Volumes as part of your application data requirements.
```

- Verify that the new values have taken effect:

```shell
helm get values <px-release> -n <portworx>
```

  You should see all the custom configurations passed using the px_install_values.yaml file.
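To confirm that an upgrade actually produced a new release revision, you can compare the REVISION field of helm status before and after running helm upgrade. A sketch, assuming the KEY: value layout shown in the sample helm output; helm_status_field is a hypothetical helper, not a Helm subcommand.

```shell
# Hypothetical helper: read `helm status` output on stdin and print the value
# of a single-word top-level field such as STATUS or REVISION.
helm_status_field() {
  awk -v key="$1:" '$1 == key { print $2; exit }'
}

# Usage (requires helm and cluster access):
#   before=$(helm status <px-release> -n <portworx> | helm_status_field REVISION)
#   helm upgrade <px-release> portworx/portworx -n <portworx> -f px_install_values.yaml
#   after=$(helm status <px-release> -n <portworx> | helm_status_field REVISION)
#   [ "$after" -gt "$before" ] && echo "upgraded to revision $after"
```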