Concepts

Overview

MySQL is a popular, open-source relational database management system. With PDS, users can leverage core capabilities of Kubernetes to deploy and manage their MySQL deployments using a cloud-native, container-based model.

PDS follows the release lifecycle of the upstream MySQL project, meaning new releases are available on PDS shortly after they have become GA. Likewise, older versions of MySQL are removed once they have reached end-of-life. The list of currently supported MySQL versions can be found here.

Similar to all data services in PDS, MySQL deployments run within Kubernetes. PDS includes a component called the PDS Deployments Operator which manages the deployment of all PDS data services, including MySQL. The operator extends the functionality of Kubernetes by implementing a custom resource called mysql. This resource type represents a MySQL instance allowing standard Kubernetes tools to be used to manage MySQL deployments, including scaling, monitoring, and upgrading.

You can learn more about the PDS architecture here.

Clustering

Within PDS, MySQL is deployed using InnoDB Cluster, which wraps MySQL’s native Group Replication capabilities. This allows MySQL to be deployed in a fault-tolerant, highly available manner.

Although PDS allows MySQL to be deployed with any number of nodes, high availability can only be achieved when running with three or more nodes. Smaller clusters should only be considered in development environments. When using multi-node MySQL deployments, one node is elected as the primary and can service write requests; all other nodes will run as secondaries and can service read requests. In the event that the primary node fails, an automatic election process promotes an eligible secondary to serve as the primary node. PDS deploys MySQL Router alongside MySQL, allowing PDS to provide endpoints that ensure client requests are automatically routed to the to the correct database node(s).

When deployed as a multi-node cluster, individual nodes, deployed as pods within a statefulSet, automatically discover each other to form a cluster. Node discovery within a MySQL cluster is also automatic when pods are deleted and recreated or when additional nodes are added to the cluster via horizontal scaling.

PDS leverages the fault tolerance of MySQL’s native clustering capabilities by spreading MySQL servers across Kubernetes worker nodes when possible. PDS utilizes the Stork, in combination with Kubernetes storageClasses, to intelligently schedule pods. By provisioning storage from different worker nodes, and then scheduling pods to be hyper-converged with the volumes, PDS deploys MySQL clusters in a way that maximizes fault tolerance, even if entire worker nodes or availability zones are impacted.

Refer to the PDS architecture to learn more about Stork and scheduling.

Replication

Application Replication

When deployed as a multi-node cluster, one node is elected as the cluster’s primary node; every other node in the deployment will serve as a read replica and will, thus, maintain a copy of the data.

Application replication via MySQL InnoDB Cluster is used to achieve high availability and can be leveraged to distribute read workloads. With data replicated to multiple nodes, more nodes are able to respond to read requests for data. Likewise, in the event of primary node failure, an eligible secondary node can be promoted to become the cluster’s new primary node. Note that additional nodes will not enhance write performance since only one node, the elected primary, can service write requests; consider vertical scaling when additional write performance is required.

Storage Replication

PDS takes data replication further. Storage in PDS is provided by Portworx Enterprise, which itself allows data to be replicated at the storage level.

Each MySQL server in PDS is configured to store data to a persistentVolume which is provisioned by Portworx Enterprise. These Portworx volumes can, in turn, be configured to replicate data to multiple volume replicas. It is recommended to use two volume replicas in PDS in combination with application replication in MySQL.

While the additional level of replication will result in write amplification, the storage-level replication solves a different problem than what is accomplished by application-level replication. Specifically, storage-level replication reduces the amount of downtime in failure scenarios. That is, it reduces RTO.

Portworx volume replicas are able to ensure that data are replicated to different Kubernetes worker nodes or even different availability zones. This maximizes the ability of the Kubernetes API scheduler to schedule MySQL pods.

For example, in cases where Kubernetes worker nodes are unschedulable, pods can be scheduled on other worker nodes where data already exist. Moreover, pods can start instantly and service traffic immediately without waiting for MySQL to replicate data to the pod.

Reference Architecture

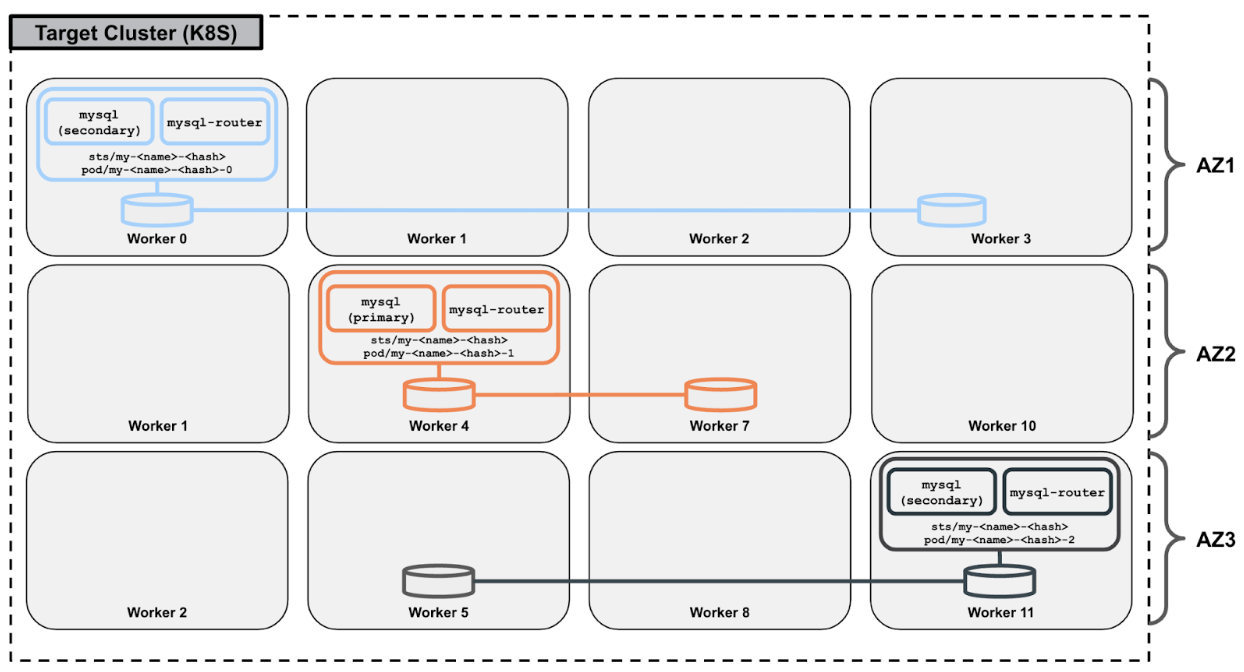

The following image illustrates the availability zone-aware MySQL and Portworx storage topology:

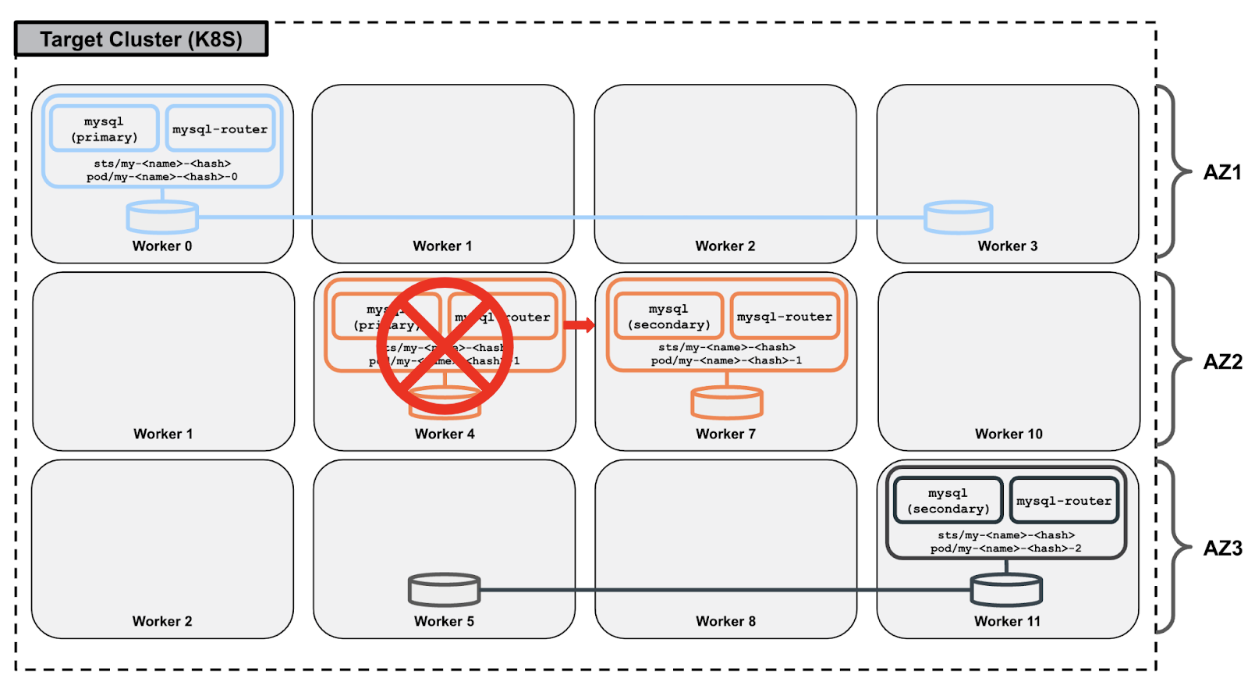

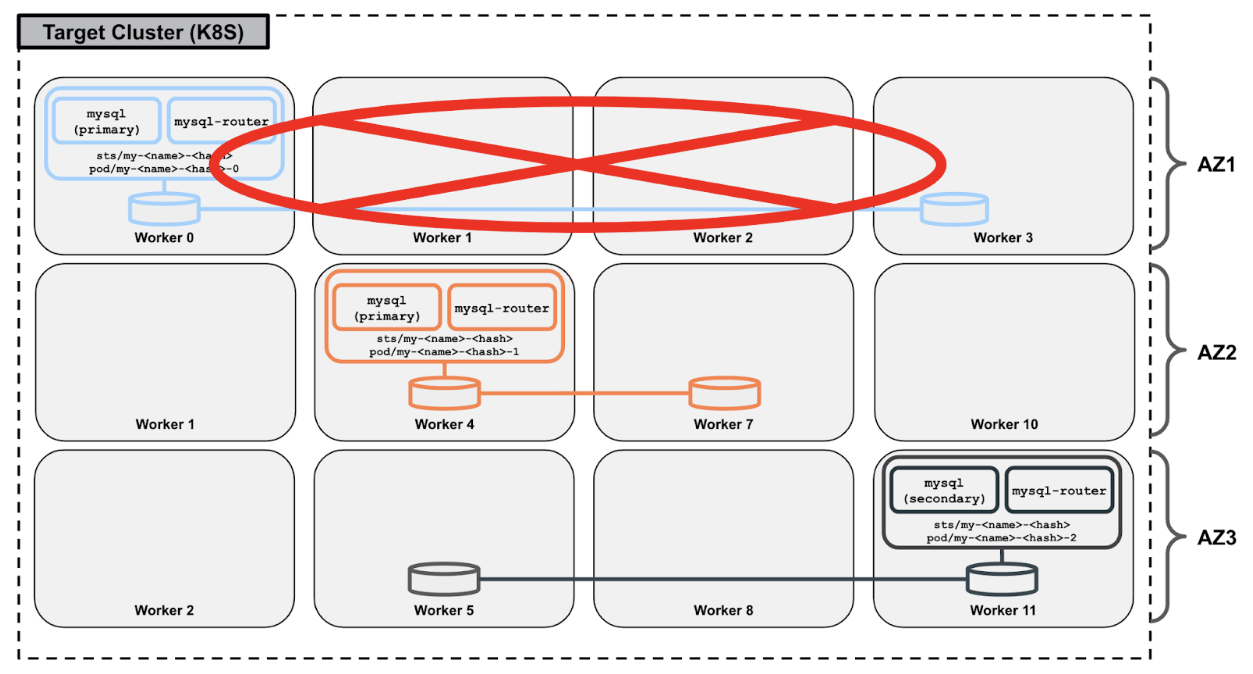

A host and/or storage failure results in the immediate rescheduling of the MySQL server to a different worker node within the same availability zone. Stork can intelligently select the new worker node during the rescheduling process such that it is a worker node that contains a replica of the MySQL server’s underlying Portworx volume, maintaining database replication while ensuring hyperconvergence for optimal performance:

Additionally, in the event that an entire availability zone is unavailable, MySQL’s native clustering capability via InnoDB Cluster ensures that client applications are not impacted.

Configuration

MySQL server configurations can be tuned by specifying setting-specific environment variables within the deployment’s application configuration template.

You can learn more about application configuration templates here. The list of all MySQL server configurations that may be overridden is itemized in the MySQL service’s reference documentation here.

Scaling

Because of the ease with which databases can be deployed and managed in PDS, it is common for customers to deploy many of them. Likewise, because it is easy to scale databases in PDS, it is common for customers to start with smaller clusters and then add resources when needed. PDS supports both vertical scaling (i.e., CPU/memory), as well as horizontal scaling (i.e., nodes) of MySQL clusters.

Vertical Scaling

Vertical scaling refers to the process of adding hardware resources to (scaling up) or removing hardware resources from (scaling down) database nodes. In the context of Kubernetes and PDS, these hardware resources are virtualized CPU and memory.

PDS allows MySQL servers to be dynamically reprovisioned with additional or reduced CPU and/or memory. These changes are applied in a rolling fashion across each of the nodes in the MySQL cluster. For highly-available MySQL clusters with three or more nodes, this operation is non-disruptive to client applications.

Horizontal Scaling

Horizontal scaling refers to the process of adding database nodes to (scaling out) or removing database nodes from (scaling in) a cluster. Currently, PDS supports only scaling out of clusters. This is accomplished by adding additional pods to the existing MySQL cluster by updating the replica count of the statefulSet.

For MySQL, adding nodes equates to adding additional standby nodes, which can serve as read replicas. Thus, horizontal scaling can be used as a means to scaling a deployment's read capacity, by distributing read workloads over a large number of nodes.

Connectivity

PDS manages pod-specific services whose type is determined based on user input (currently, only LoadBalancer and ClusterIP types are supported). This ensures a stable IP address beyond the lifecycle of the pod. For each of these services, an accompanying DNS record will be managed automatically by PDS. Connection information provided through the PDS UI will reflect this by providing users with an array of MySQL server endpoints. Specifying some or all individual server endpoints in client configurations will distribute client requests and mitigate connectivity issues in the event of any individual server being unavailable.

PDS will create a default administrator user called pds which can be used to connect to the database initially. This user can be used to create additional users and can be dropped if needed. Instance-specific authentication information, including the pds user’s password, can be retrieved from the PDS UI within the instance’s connection details page.

Backup and Restore

Backup and restore functionality for MySQL in PDS is enabled by Percona XtraBackup, an open-source backup utility for MySQL. Backups can be taken ad hoc or can be performed on a schedule.

To learn more about ad hoc and scheduled backups in PDS, refer to the PDS backups docs.

Backups

Each MySQL deployment in PDS is bundled with Percona Xtrabackup. Xtrabackup is configured to take a backup of all databases within the MySQL deployment and to store the data to a dedicated Portworx volume.

The backup volume is shared across all nodes in the MySQL deployment. By using a dedicated volume, the backup process does not interfere with the performance of the database.

Once Xtrabackup has completed the backup, the PDS Backup Operator makes a copy of the volume to a remote object store. This feature, which is provided by Portworx Enterprise’s cloud snapshot functionality, allows PDS to fulfill the 3-2-1 backup strategy – three copies of data on two types of storage media with one copy offsite.

Restore

Restoring MySQL in PDS is done out-of-place. That is to say, PDS will deploy a new MySQL cluster and restore the data to the new cluster. This prevents users from accidentally overwriting data and allows users to stand up multiple copies of their databases for debugging or forensic purposes.

PDS does not currently support restoration of specific databases or tables.

As with restoring any database, loss of data is likely to occur. Because user passwords are stored within the database, restoring from backup will revert any password changes. Therefore, in order to ensure access to the database, users must manage their own credentials.

Monitoring

With each MySQL server, PDS bundles a Prometheus exporter for monitoring purposes. This exporter is provided by the Prometheus community and makes MySQL metrics available in the Prometheus text-based format. For a full list of metrics, see the reference documentation for MySQL.

The metrics captured by PDS are available for export from the PDS Control Plane. A Prometheus endpoint is available for use with standard tools such as Grafana. For more information about monitoring and data visualization of metrics, see the PDS Platform documentation.